The CVE Researcher is a multi-agent AI pipeline that automates vulnerability research, detection template generation, and exploitation analysis. Built on Google’s Agent Development Kit (ADK), it coordinates specialized AI models through four phases — deep research, technology reconnaissance, actor-critic template generation, and exploitation analysis — to produce production-ready Nuclei detection templates overnight.

Beyond Simple Automation

Multi-agent AI systems are transforming how security teams research and respond to new CVEs. In Part 1 of this series¹, we introduced the CVE Researcher and its impact on vulnerability management operations. This post explores the technical architecture that makes automated CVE research possible: a multi-agent orchestration system built on Google’s Agent Development Kit (ADK) that coordinates specialized AI models through a structured research pipeline.

The system demonstrates how modern AI architectures can tackle complex, multi-step security workflows that previously required significant human expertise.

The CVE Researcher Pipeline

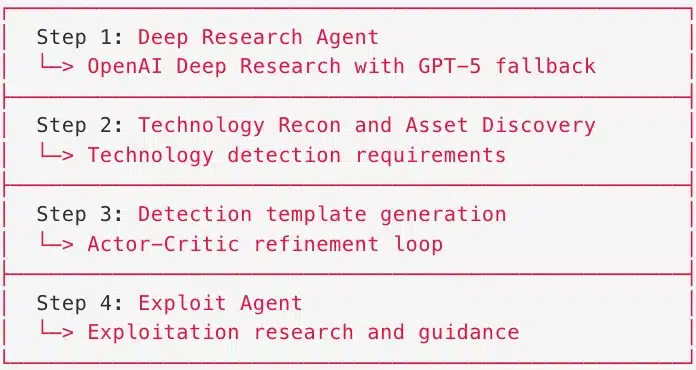

The CVE Researcher consists of four distinct phases, each using one or more specialized agents. Rather than relying on a single large language model to handle everything, the pipeline decomposes the problem into discrete tasks suited to different model capabilities.

Exhibit 1 - CVE Researcher Pipeline Architecture

This decomposition allows each agent to use models optimized for its specific task. Research benefits from models with web search capabilities. Template generation benefits from models trained on code. Evaluation benefits from models with strong reasoning abilities.
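As a rough illustration of this decomposition, the four phases can be modeled as independent callables that pass a shared state object down the line. This is a plain-Python sketch, not the ADK API: `PipelineState`, `make_phase`, and the phase names are hypothetical stand-ins, and each phase is a stub where a real agent would call a model.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class PipelineState:
    """Shared state handed from one phase to the next (hypothetical shape)."""
    cve_id: str
    artifacts: dict[str, str] = field(default_factory=dict)


Phase = Callable[[PipelineState], PipelineState]


def make_phase(name: str) -> Phase:
    """Return a stub phase that records a placeholder artifact under `name`."""
    def run(state: PipelineState) -> PipelineState:
        # A real phase would invoke its agent(s) here and store their output.
        state.artifacts[name] = f"{name} output for {state.cve_id}"
        return state
    return run


# Phase order follows the four phases described in the article.
PHASES: list[tuple[str, Phase]] = [
    (name, make_phase(name))
    for name in (
        "deep_research",
        "tech_recon",
        "template_generation",
        "exploitation_analysis",
    )
]


def run_pipeline(cve_id: str) -> PipelineState:
    """Run every phase in order, threading the state through each one."""
    state = PipelineState(cve_id)
    for _name, phase in PHASES:
        state = phase(state)
    return state
```

Because each phase only sees the state object, a phase can be swapped, tested, or re-run in isolation without touching the others.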

How Does the Deep Research Phase Work?

The Research Agent handles the pipeline’s information-gathering phase. It uses OpenAI’s deep research model (o4-mini-deep-research) with web search capabilities to synthesize vulnerability information from multiple sources: NVD entries, vendor advisories, security researcher publications, and proof-of-concept repositories.

Research queries can take 10-15 minutes as the model iteratively searches, reads, and synthesizes information. To handle cases where the primary model times out or encounters rate limits, the system implements automatic fallback to GPT-5 with reasoning capabilities. This fallback uses a shorter timeout window and reduced tool call limits, trading thoroughness for reliability.
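The fallback behavior described above might look something like the following sketch. The timeout and tool-call values are illustrative assumptions (the article gives no numbers beyond the 10-15 minute research window), the `gpt-5` identifier string is assumed, and `run_model` is a hypothetical callable standing in for whatever client actually invokes the model.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence


@dataclass(frozen=True)
class ModelConfig:
    name: str
    timeout_s: int        # assumed value, not taken from the article
    max_tool_calls: int   # assumed value, not taken from the article


class ModelUnavailable(Exception):
    """Raised by a runner when the model times out or is rate limited."""


# Primary gets the full research window; the fallback trades depth for reliability.
PRIMARY = ModelConfig("o4-mini-deep-research", timeout_s=900, max_tool_calls=40)
FALLBACK = ModelConfig("gpt-5", timeout_s=300, max_tool_calls=15)


def research_with_fallback(
    cve_id: str,
    run_model: Callable[[ModelConfig, str], str],
    configs: Sequence[ModelConfig] = (PRIMARY, FALLBACK),
) -> tuple[str, str]:
    """Try each model in order; return (model name, report) from the first success."""
    last_error: Optional[Exception] = None
    for config in configs:
        try:
            return config.name, run_model(config, cve_id)
        except ModelUnavailable as exc:
            last_error = exc  # remember the failure and move to the next model
    raise RuntimeError(f"all research models failed for {cve_id}") from last_error
```

Returning the model name alongside the report lets downstream phases record which model produced the research, which is useful when a fallback report is shallower than usual.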

The output is a comprehensive research report containing:

- Vulnerability summary and technical details

- Affected products and version information

- Known exploitation techniques

- Detection indicators and remediation guidance

Technology Reconnaissance and Asset Correlation

Raw CVE data rarely maps cleanly to organizational assets. The CVE Tech Recon Agent bridges this gap by extracting structured technology detection requirements from the research output. It identifies:

- Primary affected technologies (e.g., “Apache Log4j 2.x”)

- Supporting technologies that may indicate exposure

- Vulnerable configurations and deployment patterns

- Technical indicators for fingerprinting

The Praetorian Guard Tech Recon Tool then correlates these requirements against actual organizational assets through a four-phase process:

- Discovery: Query the Praetorian Guard platform² for technologies deployed across client environments

- Correlation: Match affected CVE technologies against discovered assets

- Extraction: Parse vendor and product identifiers from CPE (Common Platform Enumeration) strings for targeted searching

- Integration: Search existing Nuclei template repositories for proven detection patterns

This phase answers the critical question: “Which of our assets might be vulnerable to this CVE?”
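The extraction and correlation steps can be sketched roughly as follows, assuming CPE 2.3 formatted strings and an in-memory asset inventory. The `assets` mapping is a hypothetical stand-in for data returned by the Praetorian Guard platform, whose API is not shown here, and the matching rule (same vendor and product) is a simplification that ignores version ranges.

```python
import re
from typing import NamedTuple, Optional


class CpeId(NamedTuple):
    part: str
    vendor: str
    product: str
    version: str


def parse_cpe23(cpe: str) -> Optional[CpeId]:
    """Parse a CPE 2.3 formatted string; return None if it is malformed."""
    # Split on colons that are not escaped with a backslash.
    fields = re.split(r"(?<!\\):", cpe)
    # "cpe", "2.3", then 11 attributes (part, vendor, product, version, ...).
    if len(fields) != 13 or fields[0] != "cpe" or fields[1] != "2.3":
        return None
    return CpeId(*fields[2:6])


def correlate(affected: list[str], assets: dict[str, list[str]]) -> list[str]:
    """Return asset names whose CPEs share a vendor/product with an affected CPE."""
    targets = {(c.vendor, c.product) for c in map(parse_cpe23, affected) if c}
    matches = []
    for asset, cpes in assets.items():
        parsed = (parse_cpe23(c) for c in cpes)
        if any(p and (p.vendor, p.product) in targets for p in parsed):
            matches.append(asset)
    return matches
```

Matching on vendor and product alone deliberately over-reports: it is a cheap first pass that narrows the asset list before version-aware checks or detection templates are run against the candidates.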

How Does Actor-Critic Template Generation Work?

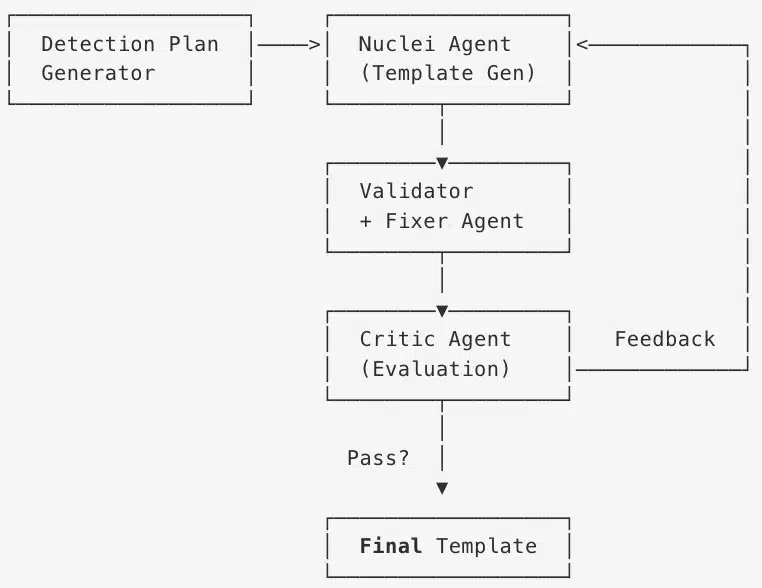

Template generation is the most sophisticated phase of the pipeline. It combines a set of sub-agents with an iterative actor-critic loop that generates and refines Nuclei detection templates.

The process begins with the Detection Plan Generator Agent, which creates a structured detection strategy based on the research findings. This plan specifies:

- HTTP request patterns to test

- Response indicators to match

- False positive mitigation strategies

The Nuclei Detection Agent then generates initial templates following this plan. Rather than accepting the first output, the system validates each template through multiple steps:

- Syntax Validation: Verify YAML structure and Nuclei schema compliance

- Template Fixer Agent: AI-powered correction of syntax errors, schema violations, and structural issues

- Re-validation: Confirm fixes resolved identified issues

- Security Intelligence Extraction: Analyze templates from an offensive perspective
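To give a sense of what the syntax validation step checks, here is a minimal sketch that inspects a template already parsed from YAML into a Python dict. It covers only a small, simplified subset of the Nuclei template schema (an `id`, an `info` block with `name`, `author`, and `severity`, and at least one protocol block), and the protocol list is partial. The function name and error messages are illustrative assumptions, not the pipeline's actual validator.

```python
ALLOWED_SEVERITIES = {"info", "low", "medium", "high", "critical", "unknown"}
# Partial list of protocol block keys; real templates may use others.
PROTOCOL_KEYS = {"http", "dns", "tcp", "file", "headless", "ssl"}


def validate_template(template: dict) -> list[str]:
    """Return a list of human-readable problems; an empty list means it passed."""
    errors: list[str] = []

    template_id = template.get("id")
    if not isinstance(template_id, str) or not template_id:
        errors.append("missing or empty 'id'")

    info = template.get("info")
    if not isinstance(info, dict):
        errors.append("missing 'info' block")
    else:
        for key in ("name", "author", "severity"):
            if not info.get(key):
                errors.append(f"missing 'info.{key}'")
        severity = info.get("severity")
        if severity and severity not in ALLOWED_SEVERITIES:
            errors.append(f"unknown severity: {severity!r}")

    if not PROTOCOL_KEYS.intersection(template):
        errors.append("no protocol block (e.g. 'http') found")

    return errors
```

Returning a list of errors rather than raising on the first one gives a fixer step the full set of problems to address in a single pass.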

The Critic Agent evaluates outputs and provides feedback for iteration. This actor-critic pattern, borrowed from reinforcement learning, enables the system to progressively improve template quality rather than accepting potentially flawed initial generations.

Exhibit 2 - Actor-Critic Refinement Loop

The feedback loop is critical. When the Critic Agent identifies issues with a generated template, it provides specific feedback that flows back to the Nuclei Detection Agent for regeneration. This continues until the template passes validation or reaches the maximum iteration limit. In practice, most templates require 2-3 iterations before achieving production quality.
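The control flow of such a loop can be sketched in a few lines. Here `generate` and `critique` are hypothetical callables standing in for the generating agent and the Critic Agent, and the default `max_iterations` of 5 is an assumption, since the article does not state the actual limit.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Review:
    approved: bool
    feedback: str = ""


def refine(
    generate: Callable[[Optional[str]], str],
    critique: Callable[[str], Review],
    max_iterations: int = 5,  # assumed limit, not taken from the article
) -> tuple[str, int, bool]:
    """Run the actor-critic loop; return (template, iterations used, approved)."""
    feedback: Optional[str] = None
    template = ""
    for iteration in range(1, max_iterations + 1):
        # Actor: produce a template, using the critic's last feedback if any.
        template = generate(feedback)
        # Critic: approve the template or explain what needs to change.
        review = critique(template)
        if review.approved:
            return template, iteration, True
        feedback = review.feedback
    # Limit reached: hand back the last attempt, flagged as not approved.
    return template, max_iterations, False
```

Returning the unapproved template instead of raising keeps the last attempt available for human review when the loop runs out of iterations.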

Exploitation Research Agent

The Exploit Agent handles the final analytical phase of the pipeline. While the Nuclei Detection Agent focuses on identifying vulnerable systems, the Exploit Agent examines the vulnerability from an offensive perspective.

This agent analyzes:

- Attack vectors: How an adversary would realistically exploit this vulnerability

- Exploitation techniques: Specific methods, payloads, and tooling relevant to the CVE

- Impact assessment: What an attacker gains from successful exploitation

- Chaining potential: How this vulnerability could combine with others in an attack chain

The Exploit Agent draws from the comprehensive research report and produces exploitation guidance that helps security teams understand the real-world risk. This offensive perspective ensures that detection templates target the actual exploitation patterns adversaries would use, not just theoretical indicators.

Production Integration: Work Done While You Sleep

The pipeline does not stop at template generation. For network-critical vulnerabilities (those with network attack vectors and CVSS scores above 9.0), the system automatically:

- Creates pull requests to the Praetorian Nuclei templates repository

- Generates tickets for human review and approval

- Copies artifacts to standardized output directories for downstream consumption
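The gating condition for this automation can be expressed as a small predicate over a CVSS v3.x vector string and base score. This is a sketch under the article's stated criteria; the function names are hypothetical. It reads "above 9.0" as a strict comparison, which is an assumption, since CVSS's own Critical band includes 9.0 itself.

```python
from typing import Optional


def attack_vector(vector: str) -> Optional[str]:
    """Extract the AV metric value from a CVSS v3.x vector string, if present."""
    for metric in vector.split("/"):
        key, _, value = metric.partition(":")
        if key == "AV":
            return value
    return None


def is_network_critical(
    vector: str, base_score: float, threshold: float = 9.0
) -> bool:
    """True when the attack vector is Network and the score exceeds the threshold."""
    return attack_vector(vector) == "N" and base_score > threshold
```

For example, a vector of `CVSS:3.1/AV:N/AC:L/PR:N/UI:N/S:C/C:H/I:H/A:H` with a base score of 10.0 passes the gate, while the same score with `AV:L` (local) does not.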

The result: when a critical vulnerability drops overnight, security engineers arrive in the morning to find the groundwork already laid. The research and exploit reports are complete. The detection templates are generated and validated. The PR is open and ready for review. The ticket is waiting with all the context they need.

This changes how security teams spend their time. Instead of grinding through repetitive research and template authoring, engineers can focus on higher-value work: applying domain expertise to customize detections for their specific environment, conducting deeper threat analysis, investigating edge cases the automation might miss, and pursuing proactive security initiatives that typically get deprioritized when teams are buried in reactive tasks. The pipeline handles the volume; humans provide the judgment, creativity, and contextual understanding that only experienced practitioners can deliver.

Why Use Multiple AI Models?

The pipeline leverages models from OpenAI, Google, and Anthropic, selecting the right tool for each task. Research phases benefit from models with web search capabilities. Code generation tasks leverage models with strong technical training. Evaluation and critique phases use models optimized for reasoning. Each agent can also fall back to alternative models when primary options encounter rate limits or timeouts, ensuring pipeline reliability even under heavy load. This multi-provider approach avoids vendor lock-in while optimizing for cost, speed, and capability across the workflow.

Lessons Learned

Building the CVE Researcher revealed several insights applicable to complex AI automation:

Decomposition matters: Breaking the problem into specialized agents outperformed monolithic approaches. Each agent can be optimized, tested, and improved independently.

Fallbacks are essential: Production systems encounter rate limits, timeouts, and model degradation. Graceful fallback to alternative models maintains pipeline reliability.

Iteration beats generation: The actor-critic loop consistently produces higher quality templates than single-shot generation. The additional latency is worthwhile for production-grade outputs.

Human oversight remains critical: Automation handles volume and velocity. Humans handle judgment calls about deployment priorities and remediation strategies.

Conclusion

The CVE Researcher demonstrates how multi-agent AI systems can tackle complex security workflows. By decomposing vulnerability research into specialized phases, coordinating multiple AI models, and implementing iterative refinement, the pipeline achieves automation that augments rather than replaces human expertise.

Organizations interested in exploring how AI can enhance their vulnerability management programs can learn more about Praetorian’s approach through our Praetorian Guard platform² or by contacting our team³.

Frequently Asked Questions

What is the CVE Researcher?

The CVE Researcher is a multi-agent AI pipeline built by Praetorian that automates the end-to-end process of vulnerability research — from gathering intelligence about a new CVE to generating validated Nuclei detection templates and exploitation guidance. It runs overnight so security teams arrive to completed research and ready-to-review pull requests.

How does the actor-critic loop improve detection template quality?

Rather than accepting the first AI-generated template, the pipeline passes each template through a validation-feedback cycle. A Critic Agent evaluates the output and provides specific feedback to the Nuclei Detection Agent for regeneration. This iterative refinement, borrowed from reinforcement learning, typically requires 2-3 iterations before a template reaches production quality, catching syntax errors, schema violations, and false positive risks that single-shot generation would miss.

Which AI models does the pipeline use?

The pipeline uses models from OpenAI, Google, and Anthropic, selecting the best fit for each task. Research phases use models with web search capabilities (like OpenAI’s deep research model). Code generation leverages models with strong technical training. Evaluation and critique use models optimized for reasoning. Each agent also has fallback models to ensure reliability under rate limits or timeouts.

Does the CVE Researcher replace human security analysts?

No. The pipeline automates the high-volume, repetitive aspects of CVE research — gathering intelligence, generating initial detection templates, and synthesizing exploitation guidance. Human engineers still review all outputs, customize detections for their specific environment, make deployment decisions, and conduct deeper threat analysis that requires contextual judgment only experienced practitioners can provide.

What triggers the CVE Researcher pipeline?

The pipeline runs automatically when new critical vulnerabilities are published — particularly those with network attack vectors and CVSS scores above 9.0. It can also be triggered manually for any CVE that requires investigation.

References:

1. Part 1: AI-Powered CVE Research: Winning the Race Against Emerging Vulnerabilities (link to Part 1)

2. Praetorian Guard – Continuous Attack Surface Management

3. Contact Praetorian