In a perfect world, all organizations would incorporate security into their cloud environments from the start. Unfortunately, common development practices tend to postpone the implementation of security controls in the product environment in favor of shipping product features.

The reasons for this are manifold: an early-stage product may ignore robust security processes in favor of speed, but later fail to build robust security controls after achieving maturity. Early-stage products may also have far fewer resources for implementing security controls, and then have to resolve insecure designs once the organization is more mature. However, retroactively building security controls into existing systems to counter this tech debt can be difficult and can introduce friction into developer workflows. In this blog post, we outline how we helped design security controls and observability features for Google Cloud IAM in a client’s GCP environment. We also generated two technical artifacts for implementing these solutions: ephemeral-iam, a token-based privilege escalation tool, and a technical guide to using Trusted Platform Modules (TPMs) for service account credential storage.

Principle of Least Privilege in Google Cloud Platform

It can be convenient to assume that engineers in an organization will always operate with the best intentions and complete their day-to-day tasks without error, but this is not the case. Verizon’s 2020 Data Breach Investigation Report found that 30% of data breaches involved internal actors. Additionally, compromised developer account credentials are a real threat that should be considered when determining what access to grant engineers in an environment. More access means greater risk and a larger attack surface for adversaries to exploit.

It can be difficult to define exactly what permissions someone needs when working with complex access control systems such as GCP’s Cloud IAM. This task becomes even harder in an existing environment, where permissions changes can disrupt developer workflows and the environment itself.

Google Cloud IAM

In GCP, instead of binding roles to a member to define what access they have, users attach IAM Policies to resources. IAM Policies define who has what type of access to the resource they are attached to by binding members to a role on the resource (where roles are a defined set of individual permissions). For example, if I want to give User A the ability to list objects in a Cloud Storage bucket, I would create an IAM Policy that binds User A to the Storage Object Viewer role and attach it to the bucket.
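To make the binding model concrete, here is a minimal sketch of the Cloud Storage example above expressed as a policy document. The `bindings`, `role`, and `members` layout follows the IAM Policy JSON format; the `add_binding` helper and the e-mail address are hypothetical names used only for illustration.

```python
import copy


def add_binding(policy, role, member):
    """Return a copy of `policy` in which `member` is bound to `role`."""
    updated = copy.deepcopy(policy)
    bindings = updated.setdefault("bindings", [])
    for binding in bindings:
        if binding["role"] == role:
            # Role already has a binding: add the member if it is missing.
            if member not in binding["members"]:
                binding["members"].append(member)
            return updated
    # No binding for this role yet: create one.
    bindings.append({"role": role, "members": [member]})
    return updated


# Policy attached to the bucket (hypothetical user address).
bucket_policy = add_binding(
    {"bindings": []},
    "roles/storage.objectViewer",  # Storage Object Viewer
    "user:user-a@example.com",
)
print(bucket_policy)
```

The policy is attached to the bucket itself, so the same user may hold entirely different roles on other resources.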

These IAM Policies can be set at any level in the GCP resource hierarchy (organization level, folder level, project level, resource level), and are transitively inherited by descendant resources. This decentralization makes it difficult to enumerate all possible permissions granted to a member. Additionally, there are numerous ways for members to assume other members’ identities (e.g. service account impersonation), and permissions can be easily assigned across projects provided that the appropriate organization policy is disabled. For an organization with many projects, the number of all possible IAM permissions increases drastically.
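The effect of inheritance can be illustrated with a small sketch that walks up a resource hierarchy and collects every role a member holds along the way. The hierarchy, resource names, and members below are made up for illustration, and the sketch deliberately ignores group membership, impersonation, and IAM conditions, all of which make real enumeration harder.

```python
# Hypothetical hierarchy: each resource maps to its parent.
PARENTS = {
    "buckets/logs": "projects/app-prod",
    "projects/app-prod": "folders/engineering",
    "folders/engineering": "organizations/example",
}

# Hypothetical policies: resource -> role -> set of members.
POLICIES = {
    "organizations/example": {"roles/viewer": {"group:eng@example.com"}},
    "folders/engineering": {"roles/editor": {"user:alice@example.com"}},
    "buckets/logs": {"roles/storage.objectViewer": {"user:bob@example.com"}},
}


def effective_roles(resource, member):
    """Collect roles bound to `member` on `resource` and all of its ancestors."""
    roles = set()
    node = resource
    while node is not None:
        for role, members in POLICIES.get(node, {}).items():
            if member in members:
                roles.add(role)
        node = PARENTS.get(node)  # None once we pass the organization
    return roles


# Alice was granted Editor on a folder, yet it applies to the bucket too.
print(effective_roles("buckets/logs", "user:alice@example.com"))
```

Answering "what can this member do?" for a whole organization means repeating this walk for every resource, which is why the number of possible permissions grows so quickly.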

Problem Statement

The decision to investigate privileged identities in GCP was motivated by several factors.

Informal Standard Operating Procedures

Ideally, engineers would be granted a minimal set of permissions required to complete everyday tasks, and any operation that requires elevated privileges would be handled via a separate, well-defined process. However, some organizations do not have formal policies for this escalation process, and the process often devolves into undocumented, persistent elevated privileges.

Overly Permissive User Accounts

In many cases, IAM privileges can become extremely confusing, especially for users who are not familiar with GCP. As a result, many development users will grant themselves Editor roles at the project or organization level. Unfortunately, the Google IAM documentation notes that these “primitive” roles are granted the ability to modify every resource in the project, substantially increasing their risk. Administrators should shy away from primitive roles and default service account identities, which rely on insecure defaults and are extremely privileged. Built-in tools such as IAM Recommender and IAM-relevant organization policies can centralize control and minimize insecure defaults with basic IAM permissions.
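As a rough illustration of how such bindings could be surfaced, the sketch below scans a policy document for the three primitive (basic) roles. It assumes the policy has already been exported as JSON (for example with `gcloud projects get-iam-policy`); the function name and sample members are hypothetical.

```python
# The three primitive (basic) roles in Cloud IAM.
PRIMITIVE_ROLES = {"roles/owner", "roles/editor", "roles/viewer"}


def find_primitive_bindings(policy):
    """Return sorted (role, member) pairs that use a primitive role."""
    findings = []
    for binding in policy.get("bindings", []):
        if binding["role"] in PRIMITIVE_ROLES:
            for member in binding["members"]:
                findings.append((binding["role"], member))
    return sorted(findings)


# Hypothetical project-level policy.
project_policy = {
    "bindings": [
        {"role": "roles/editor", "members": ["user:dev@example.com"]},
        {"role": "roles/storage.objectViewer", "members": ["user:ops@example.com"]},
    ]
}
print(find_primitive_bindings(project_policy))
```

A check like this only covers a single policy; it would need to run against every project, folder, and the organization to give a complete picture.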

Limited Visibility Around Existing State of IAM

Organizations typically do not have a strong understanding of their IAM landscape. While some users may manage project or organization permissions centrally in an infrastructure as code tool like Terraform, it is all too easy for frustrated administrators to grant themselves permissions to a particular resource. Over time, these actions generate a complex state for which it becomes difficult to track excessive roles. It is often difficult for one user to know exactly what they can do against a particular resource without having to enumerate all permissions against all resources.

Design Considerations

When designing for engineers in a large GCP organization to escalate privileges, Praetorian focused on the following heuristics:

- Limiting Compromise Impact – In a large environment, the compromise of an individual component is inevitable. From an architectural perspective, systems for privileged access should enforce processes for limiting the breadth and scope of intrusion. In the event of a compromised credential, responders want to prevent key exfiltration, terminate privileged access, and investigate activity. Subjects should only obtain permissions for which a business need exists and manage them centrally to account for drift.

- Auditability and Observability — Praetorian wanted to collect contextual information about the reasons why users need to request break-glass permissions. Having the ability to understand who requested which permissions and their subsequent actions significantly decreases mean time to detection if an account were compromised.

- Limiting Privileged Accounts – Some of the solutions Praetorian discussed involved deploying infrastructure through which users could assume privileged permissions. Doing so requires attaching privileged service accounts to resources in the environment, so Praetorian wanted to refrain from deploying privileged services where possible.

- Session Length Restrictions — Praetorian wanted to enforce restrictions on the length of “privileged” sessions. If these permissions lasted forever, then there would be no real boundary between a user’s default permissions and the set of “break-glass” permissions.

Additionally, Praetorian wanted to recommend solutions that didn’t add unnecessary management overhead. Oftentimes, adding friction to limit the negative downstream effects of a compromised account decreases usability for end users. In this case, Praetorian expected the system to handle an engineering team of roughly a hundred users who required privileged access for management and debugging purposes. Praetorian wanted a system that could quickly onboard new users and iterate on privileged permission sets.

Strategy 1 – Token Impersonation

An initial strategy was to allow users to impersonate particular service accounts with elevated privileges. Privileged permission sets would be granted to service accounts; those permissions would then be accessible to users via the `iam.serviceAccounts.getAccessToken` permission.

If a user had the `iam.serviceAccounts.getAccessToken` permission and needed to escalate to a privileged service account, they would be able to create a short-lived session token for that service account using the `projects.serviceAccounts.generateAccessToken` method. The default maximum lifetime of tokens generated via the `generateAccessToken` method is 1 hour, and it can be extended up to 12 hours through the `iam.allowServiceAccountCredentialLifetimeExtension` organization policy constraint.
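For reference, a call to this method can be sketched as a plain REST request against the IAM Service Account Credentials API. The snippet below only builds the URL and JSON body and does not send anything; the helper name and the service account address are hypothetical, and the caller would still need to attach their own bearer token.

```python
import json

IAM_CREDENTIALS_ENDPOINT = "https://iamcredentials.googleapis.com/v1"
MAX_LIFETIME_SECONDS = 12 * 60 * 60  # upper bound with the lifetime extension


def build_generate_access_token_request(service_account_email,
                                         lifetime_seconds=3600,
                                         scopes=None):
    """Build the URL and JSON body for a generateAccessToken call."""
    if not 0 < lifetime_seconds <= MAX_LIFETIME_SECONDS:
        raise ValueError("lifetime must be between 1 second and 12 hours")
    url = (
        f"{IAM_CREDENTIALS_ENDPOINT}/projects/-/serviceAccounts/"
        f"{service_account_email}:generateAccessToken"
    )
    body = {
        "scope": scopes or ["https://www.googleapis.com/auth/cloud-platform"],
        "lifetime": f"{lifetime_seconds}s",  # duration string, e.g. "600s"
    }
    return url, json.dumps(body)


# Hypothetical privileged service account, requesting a ten-minute token.
url, body = build_generate_access_token_request(
    "break-glass@example-project.iam.gserviceaccount.com",
    lifetime_seconds=600,
)
print(url)
print(body)
```

Requests above one hour are rejected by the API unless the service account is listed in the lifetime extension constraint, so the 12-hour bound here is only a local sanity check.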

The benefit of this approach is its simplicity. In this case, Praetorian deployed a custom solution for a client: ephemeral-iam, a CLI tool that takes the functionality of GCP service account impersonation and adds observability and access control features. Using ephemeral-iam, engineers are required to justify their need to escalate their privileges by providing a “reason”. The reason that they provide is then appended to all Stackdriver audit logs associated with that privileged session. This allows for alerts to be triggered when a token is generated for a service account without the required reason field with the correct signature generated by ephemeral-iam. Additionally, service account tokens are restricted to a ten-minute lifespan instead of the 1 hour default, reducing the window in which an attacker can abuse compromised credentials.
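A detective control along these lines could be sketched as a filter over exported audit log entries. The field path used below (`protoPayload.requestMetadata.requestAttributes.reason`) and the `GenerateAccessToken` method name are assumptions about the audit log layout rather than details taken from ephemeral-iam, and the sketch does not attempt to verify the signature mentioned above.

```python
def tokens_without_reason(entries, method_name="GenerateAccessToken"):
    """Return audit log entries for token generation that carry no reason."""
    flagged = []
    for entry in entries:
        payload = entry.get("protoPayload", {})
        if payload.get("methodName") != method_name:
            continue  # not a token generation event
        attributes = payload.get("requestMetadata", {}).get("requestAttributes", {})
        reason = attributes.get("reason", "")
        if not reason.strip():
            flagged.append(entry)
    return flagged


# Hypothetical, heavily trimmed audit log entries.
sample_entries = [
    {"insertId": "a1", "protoPayload": {
        "methodName": "GenerateAccessToken",
        "requestMetadata": {"requestAttributes": {"reason": "debugging prod outage"}}}},
    {"insertId": "b2", "protoPayload": {
        "methodName": "GenerateAccessToken",
        "requestMetadata": {"requestAttributes": {}}}},
    {"insertId": "c3", "protoPayload": {"methodName": "storage.objects.list"}},
]

for entry in tokens_without_reason(sample_entries):
    print("ALERT: token generated without a reason:", entry["insertId"])
```

In practice the same condition would more likely live in a log-based alert than in a script, but the logic is the same: any token generated outside the sanctioned tool stands out.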

However, this approach has drawbacks. Administrators would have to manage impersonation privileges throughout their organization. Service account impersonation is typically not a meaningful permission boundary: users with service account impersonation rights effectively have the ability to act as that service account or user. The administrators of these accounts must also have broad IAM access to onboard users with these permissions. If those users are compromised, an attacker could simply exfiltrate the user credentials and call the `projects.serviceAccounts.generateAccessToken` method directly to create longer-duration access tokens.

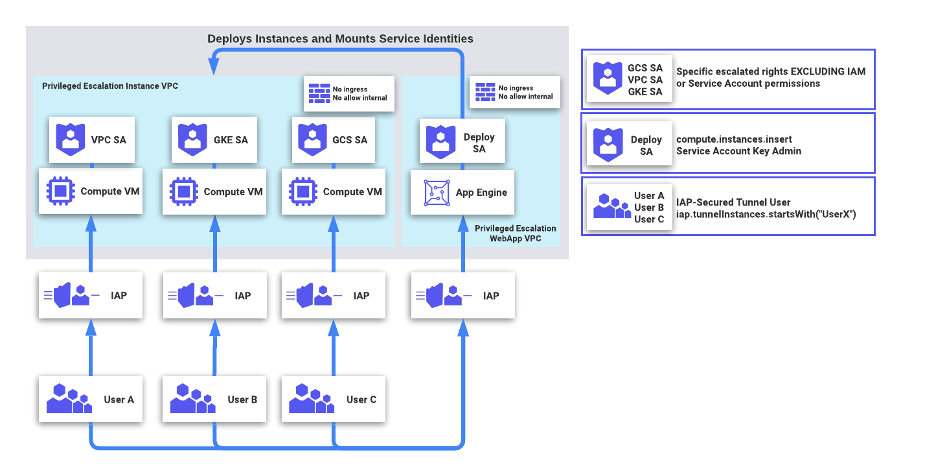

Strategy 2 – Service-bound Token Impersonation

A second solution involved generating service-bound tokens for users by mounting identities onto Google Compute Instances. A self-service escalation system could look like the following:

Here, users do not have arbitrary service account impersonation credentials. Instead, users navigate to an API on an App Engine instance and request a particular service account to which they have access. The name of each instance would begin with the specific user’s name, and the instance would mount the service account identity that the user requested. On the IAM side, users would be granted the IAP-Secured Tunnel User role with an IAM condition restricting IAP tunnels to hosts whose names begin with the name of the user. Instances would be automatically terminated after a set period of time.
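The conditional binding described here could look roughly like the sketch below. `roles/iap.tunnelResourceAccessor` is the role ID for IAP-Secured Tunnel User and `startsWith` is a standard CEL string function, but the exact `resource.name` format that IAP presents to the condition is an assumption and should be checked against the IAM Conditions documentation. The helper, project, and zone names are hypothetical.

```python
def iap_tunnel_binding(user_email, project, zone):
    """Build a conditional binding limiting IAP tunnels to the user's own instances."""
    username = user_email.split("@", 1)[0]
    # Assumed resource name layout; instance names start with "<username>-".
    prefix = f"projects/{project}/zones/{zone}/instances/{username}-"
    return {
        "role": "roles/iap.tunnelResourceAccessor",
        "members": [f"user:{user_email}"],
        "condition": {
            "title": f"iap-tunnel-{username}-instances",
            "description": "Allow tunnels only to instances named after the user",
            "expression": f'resource.name.startsWith("{prefix}")',
        },
    }


binding = iap_tunnel_binding("alice@example.com", "escalation-project", "us-central1-a")
print(binding["condition"]["expression"])
```

Because the condition is derived from the user's name, onboarding a new engineer only requires adding one binding of this shape.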

To limit the risk of exfiltration, administrators can establish separate VPC Service Controls perimeters that block access to all Google APIs for the project containing sensitive compute or data resources and for the project containing the instances for privilege escalation, then set up a perimeter bridge between the two so that the privilege escalation project can call APIs against the target project. This mitigates the possibility of token exfiltration, but requires an organization to set up access policies in Access Context Manager to allowlist requests from individual users. The management of these policies generates potential overhead and is difficult to centralize, especially if users do not have BeyondCorp Enterprise.

One benefit of this approach is that user requests must come from specific ranges of Compute IP addresses. Engineers can generate detective controls to immediately alert on unauthorized utilization of privileged accounts as a sign of data exfiltration. Additionally, no account requires the ability to modify arbitrary IAM permissions – to onboard a user, one only has to grant that user the ability to SSH into a particular kind of instance.

One cost to this approach is the level of management required. Even though instances stay alive for a short period of time, engineering effort is required to manage backend scripts that deploy Compute instances. Another cost is that any additional segmentation between users and privileged operations generates some level of friction. Engineering teams should ensure that the level of additional friction is commensurate with the organization’s security requirements.

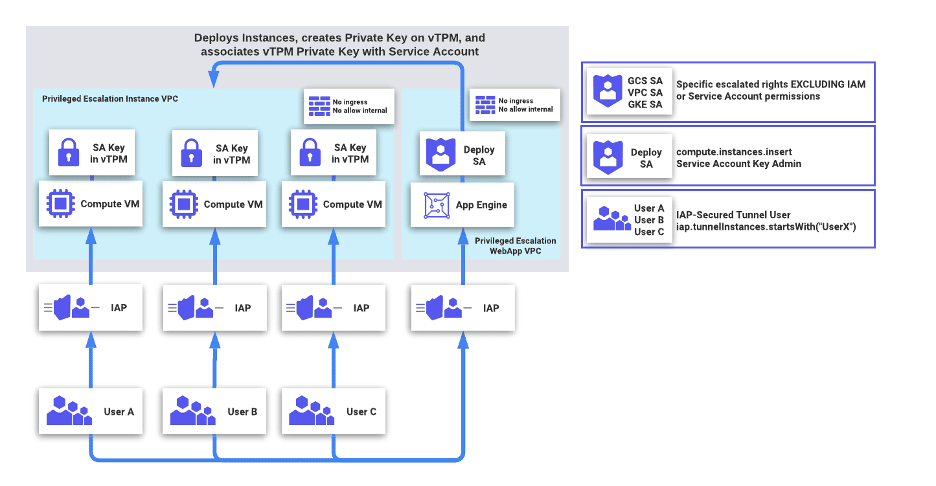

Strategy 3 – Service Account Keys Stored in vTPMs

A third strategy involved generating service account private keys in Shielded VMs’ vTPMs and subsequently signing JWTs for users to authenticate against GCP APIs. When implemented correctly, this strategy can enforce that new valid JWTs can only be generated on the Compute VM on which the service account key is stored. This strategy is much more experimental than the previous two, which makes it more susceptible to flaws due to oversight. While we do suggest further testing for an interested organization, we have outlined what a proof-of-concept implementation of this strategy could look like below.

Virtual Trusted Platform Modules (vTPMs) are trusted platform modules that can be used to protect secrets used during instance runtime. Typically implemented as a specialized chipset isolated from the primary instance, vTPMs mitigate the risk of key or secret exfiltration even if an attacker achieved root access on an instance. Currently, vTPMs are used primarily for Shielded VMs and Shielded Nodes in GCP products.

Praetorian considered a similar strategy to Strategy 2, albeit using vTPMs as opposed to mounted instance identities. A sample architecture diagram is attached below:

Here, the management App Engine instance only has the ability to directly upload service account keys to a service account. The application would conduct the following actions:

- Create a new keypair in memory and associate the public key with a service account with the `gcloud beta iam service-accounts keys upload` command.

- Deploy a compute instance and retrieve its endorsement public key with `gcloud compute instances get-shielded-identity $INSTANCE_NAME --format="value(encryptionKey.ekPub)"`.

- Seal the private key for the service account with the endorsement public key.

- Copy the sealed blob into the Compute instance, where the vTPM will unseal the private key when a user needs to sign JWTs.
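The two `gcloud` invocations in the steps above can be sketched as argument lists that a management application might hand to a subprocess. The helper names, file path, service account, instance name, and zone are placeholders, and the key generation, sealing, and copy steps are intentionally left out because they depend on the TPM tooling in use.

```python
import shlex


def upload_public_key_cmd(public_key_path, service_account_email):
    """Command that associates an existing public key with a service account."""
    return [
        "gcloud", "beta", "iam", "service-accounts", "keys", "upload",
        public_key_path,
        f"--iam-account={service_account_email}",
    ]


def endorsement_key_cmd(instance_name, zone):
    """Command that prints the endorsement public key of a Shielded VM's vTPM."""
    return [
        "gcloud", "compute", "instances", "get-shielded-identity",
        instance_name,
        f"--zone={zone}",
        "--format=value(encryptionKey.ekPub)",
    ]


# Placeholder values for illustration only.
upload_cmd = upload_public_key_cmd(
    "public_key.pem", "break-glass@example-project.iam.gserviceaccount.com"
)
ek_cmd = endorsement_key_cmd("alice-escalation-1", "us-central1-a")

for cmd in (upload_cmd, ek_cmd):
    print(shlex.join(cmd))
```

Each list can be passed to `subprocess.run(cmd, check=True, capture_output=True, text=True)`; building argument lists rather than shell strings avoids quoting problems with the `--format` expression.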

As before, users would use SSH over IAP tunnels to connect to their assigned GCE instance. This time, if their account were compromised, an attacker would not be able to exfiltrate the service account private key from the vTPM. However, users would have to complete the following steps to authenticate to the GCP API:

- Build a Service Account JWT that corresponds with the service account to be used. Details about the specific GCP JWT format can be found here.

- Using Go-TPM, sign that Service Account JWT with the private key that was sealed to the vTPM (the key whose public half was uploaded to the service account).

- Make an authenticated request, using the signed JWT as the relevant Bearer token to the GCP APIs.
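The JWT construction in these steps can be sketched with the standard library alone, leaving the signature to a callback. The claim set below follows the self-signed service account JWT format (`iss` and `sub` set to the service account e-mail, `aud` set to the API endpoint, RS256 with the key ID in `kid`); the function names, key ID, and audience are illustrative, and `sign` stands in for whatever code asks the vTPM to produce an RSA signature.

```python
import base64
import json
import time


def _b64url(data):
    """Base64url-encode bytes without padding, as required for JWTs."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def build_signing_input(sa_email, key_id, audience, now=None, lifetime=600):
    """Return the 'header.payload' string that must be signed."""
    issued_at = int(time.time()) if now is None else now
    header = {"alg": "RS256", "typ": "JWT", "kid": key_id}
    claims = {
        "iss": sa_email,
        "sub": sa_email,
        "aud": audience,
        "iat": issued_at,
        "exp": issued_at + lifetime,  # Google caps this at one hour
    }
    encoded = [
        _b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, claims)
    ]
    return ".".join(encoded)


def assemble_jwt(signing_input, sign):
    """Append the signature produced by `sign` (bytes -> bytes)."""
    signature = sign(signing_input.encode("ascii"))
    return f"{signing_input}.{_b64url(signature)}"


# Placeholder signer: a real one would delegate to the vTPM.
def fake_sign(message):
    return b"not-a-real-signature"


token = assemble_jwt(
    build_signing_input(
        "break-glass@example-project.iam.gserviceaccount.com",
        "hypothetical-key-id",
        "https://storage.googleapis.com/",
        now=1700000000,
    ),
    fake_sign,
)
print(token)
```

The resulting string is sent as `Authorization: Bearer <token>`. Because only the `sign` callback touches key material, the private key never has to leave the vTPM.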

For those interested in what this interaction looks like in practice, a detailed step-by-step technical outline can be found in this repository.

This solution avoids the complexity around managing different Service Control Policies, while maintaining a level of distance between users and long-term tokens. However, certain limitations may still exist. Users would have to utilize custom scripts which interface with the vTPM and allow developers to seamlessly generate and sign authorized JWTs. The App Engine script would also have to be protected against cross-tenant attacks. Finally, no more than ten people could impersonate a service account concurrently, given the built-in limits to user-managed service account keys. Additional users would require additional service accounts.
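The key limit translates directly into a sizing estimate. The short sketch below assumes the limit of ten user-managed keys per service account mentioned above and that each concurrent user consumes one key; the function name is hypothetical.

```python
KEYS_PER_SERVICE_ACCOUNT = 10  # user-managed key limit per service account


def service_accounts_needed(concurrent_users, keys_per_account=KEYS_PER_SERVICE_ACCOUNT):
    """Minimum number of service accounts so every concurrent user gets a key."""
    if concurrent_users < 0:
        raise ValueError("concurrent_users must be non-negative")
    # Ceiling division without floating point.
    return -(-concurrent_users // keys_per_account)


# An engineering team of roughly a hundred users, all escalated at once.
print(service_accounts_needed(100))
```

This is a worst-case figure for a single permission set; each distinct set of privileged permissions would need its own pool of service accounts.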

Conclusion

These solutions for managing privileged accounts highlight both the possibilities available to mitigate the risk of compromised developer accounts and the complexity of doing so. Oftentimes, solutions that minimize the exposure of user accounts generate friction for individual employees and additional maintenance for operators. While organizations with strict requirements can construct infrastructure to enforce short-lived tokens with strict restrictions, they should take care to conduct independent threat models for different environments and balance user trust against security requirements. With careful planning, GCP customers can balance their security and usability requirements in order to manage large teams of engineers in the cloud.

If any of this sounds interesting, our team at Praetorian is currently looking for engineers who are interested in tackling complex cloud architecture problems, whether for offensive operations, incident response, or defensive enablement. We’re always excited to push a field further and look for the next generation of secure cloud infrastructure. Please visit https://www.praetorian.com/company/careers/ if you’re interested in working with us!