A few years ago, I read the “Uroburos: The Snake Rootkit” [1] paper written by Artem Baranov and Deresz and was captivated by the hidden kernel-mode Virtual File System (VFS) functionality implemented within Uroburos. Later, I decided to learn Windows device driver programming and thought replicating this functionality within my own rootkit would be an exciting learning experience. I got busy over the next few years, but recently found time to finish the rootkit and to open-source my proof-of-concept implementation.

I implemented a hidden VFS similar to the one used within Turla’s Uroburos/Snake rootkit. Turla is a sophisticated advanced persistent threat actor that the Estonian Intelligence Service has attributed to the Federal Security Service of the Russian Federation (FSB) [2]. Analysts believe Turla was behind the Agent.BTZ malware that compromised Pentagon systems in 2008 and the 2014 compromise of RUAG, a Swiss Defense and Aerospace company [3].

My initial implementation of the rootkit required modifications to load it onto a compromised system and bypass driver signature enforcement. We are releasing an open-source tool, INTRACTABLEGIRAFFE, which is my proof-of-concept implementation of the Uroburos VFS functionality along with a basic keylogger that writes intercepted keystrokes to the non-volatile/persistent VFS.

Keylogger Design and Implementation

I initially wanted to become more familiar with Windows device driver development before implementing the VFS. To do so, I developed a keylogger that attaches itself to the device stack associated with KeyboardClass0 [4] and intercepts the I/O Request Packets (IRPs) that travel down the stack to read keystrokes. We register an I/O completion routine on each intercepted IRP, so when the underlying keyboard device driver completes the read request we can inspect it to obtain the scan code that the keyboard relayed. One key assumption is that each scan code maps to an English-language character. As a result, the tool will not properly interpret keystrokes entered by users with other keyboard layouts.
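The scan code lookup described above can be sketched in portable C. This is a minimal illustration rather than the code shipped in the tool: the table `kUsLayout` and the function `scancode_to_char` are hypothetical names, and the table assumes scan code set 1 make codes on a US English layout with no Shift or Caps Lock handling.

```c
#include <stddef.h>

/* Hypothetical lookup table: scan code set 1 make codes for a US English
 * layout, unshifted keys only. A value of 0 means "no printable mapping". */
static const char kUsLayout[0x3A] = {
    [0x02] = '1', [0x03] = '2', [0x04] = '3', [0x05] = '4', [0x06] = '5',
    [0x07] = '6', [0x08] = '7', [0x09] = '8', [0x0A] = '9', [0x0B] = '0',
    [0x10] = 'q', [0x11] = 'w', [0x12] = 'e', [0x13] = 'r', [0x14] = 't',
    [0x15] = 'y', [0x16] = 'u', [0x17] = 'i', [0x18] = 'o', [0x19] = 'p',
    [0x1C] = '\n',
    [0x1E] = 'a', [0x1F] = 's', [0x20] = 'd', [0x21] = 'f', [0x22] = 'g',
    [0x23] = 'h', [0x24] = 'j', [0x25] = 'k', [0x26] = 'l',
    [0x2C] = 'z', [0x2D] = 'x', [0x2E] = 'c', [0x2F] = 'v', [0x30] = 'b',
    [0x31] = 'n', [0x32] = 'm',
    [0x39] = ' ',
};

/* Translate one keyboard event into a character.
 * Key releases and unmapped codes return 0 so the caller can skip them. */
char scancode_to_char(unsigned short make_code, int is_key_release)
{
    if (is_key_release) {
        return 0;
    }
    if (make_code >= sizeof(kUsLayout)) {
        return 0;
    }
    return kUsLayout[make_code];
}
```

Because the table is fixed to one layout, the same scan code would be logged as the wrong character on, for example, an AZERTY keyboard, which is the limitation noted above.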

For more coverage of the basic implementation and how this method of obtaining keystrokes works, we suggest the book Rootkits: Subverting the Windows Kernel [5], specifically the section titled “The KLOG Rootkit: A Walk-Through.” Additionally, an article titled Keystroke Monitoring [6] on CodeProject outlines an implementation of a similar keylogger. This component was largely a warm-up exercise for me to gain familiarity with Windows device driver development before moving on to the more complex VFS implementation.

Implementing the Virtual File System

Uroburos as a Starting Point

I implemented the VFS by referencing the Uroburos research paper while doing some limited reverse engineering of the rootkit with IDA Pro. The dynamic analysis for this step incorporated WinDbg and Volatility, but I didn’t use them to delve deeply into the toolkit itself. Instead, I read the existing research paper to better understand how the threat actor implemented the capability, then reimplemented it based on the descriptions. Ultimately, this most likely resulted in some discrepancies between the two implementations.

Volatile and Non-Volatile File Systems

Similar to the Uroburos rootkit, the VFS implementation contains both a volatile and a non-volatile file system. The volatile file system exists only in memory. The non-volatile file system exists as a file system image hidden inside a file on the existing file system. For example, we might create a file within the “C:\Windows” directory named “hotfix.dat” which contains an embedded FAT16 file system that the rootkit then loads at startup.

The VFS leverages a worker thread that asynchronously performs read and write operations to the VFS. It also implements IOCTLs to emulate the expected interface of a hard disk drive. Currently, the only supported file system is FAT16; however, the code is structured in a way which makes it quite easy to add new file system formats.
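To make the idea of an embedded FAT16 file system more concrete, the sketch below checks whether the first 512 bytes of a container file look like a FAT16 boot sector. It is an illustration under stated assumptions, not the tool's loader: `fat_bpb` and `parse_fat16_boot_sector` are hypothetical names, and the field offsets and cluster-count thresholds follow the published FAT specification.

```c
#include <stdint.h>

/* Subset of the BIOS Parameter Block fields needed to size a FAT volume. */
typedef struct {
    uint16_t bytes_per_sector;
    uint8_t  sectors_per_cluster;
    uint16_t reserved_sectors;
    uint8_t  fat_count;
    uint16_t root_entries;
    uint16_t sectors_per_fat;
    uint32_t total_sectors;
    uint32_t cluster_count;
} fat_bpb;

/* On-disk FAT fields are little-endian regardless of the host CPU. */
static uint16_t rd16(const uint8_t *p)
{
    return (uint16_t)(p[0] | (p[1] << 8));
}

static uint32_t rd32(const uint8_t *p)
{
    return (uint32_t)p[0] | ((uint32_t)p[1] << 8) |
           ((uint32_t)p[2] << 16) | ((uint32_t)p[3] << 24);
}

/* Returns 1 when the sector looks like a FAT16 boot sector, 0 otherwise. */
int parse_fat16_boot_sector(const uint8_t sector[512], fat_bpb *out)
{
    uint32_t root_dir_sectors, meta_sectors;

    if (sector[510] != 0x55 || sector[511] != 0xAA) {
        return 0;                        /* missing boot signature */
    }
    out->bytes_per_sector    = rd16(sector + 11);
    out->sectors_per_cluster = sector[13];
    out->reserved_sectors    = rd16(sector + 14);
    out->fat_count           = sector[16];
    out->root_entries        = rd16(sector + 17);
    out->sectors_per_fat     = rd16(sector + 22);
    out->total_sectors       = rd16(sector + 19);
    if (out->total_sectors == 0) {
        out->total_sectors = rd32(sector + 32);  /* large-volume field */
    }
    if (out->bytes_per_sector == 0 || out->sectors_per_cluster == 0 ||
        out->sectors_per_fat == 0) {
        return 0;
    }
    /* Each root directory entry is 32 bytes; round up to whole sectors. */
    root_dir_sectors = ((uint32_t)out->root_entries * 32 +
                        out->bytes_per_sector - 1) / out->bytes_per_sector;
    meta_sectors = out->reserved_sectors +
                   (uint32_t)out->fat_count * out->sectors_per_fat +
                   root_dir_sectors;
    if (out->total_sectors <= meta_sectors) {
        return 0;
    }
    out->cluster_count = (out->total_sectors - meta_sectors) /
                         out->sectors_per_cluster;
    /* The FAT type is defined purely by the number of data clusters. */
    return out->cluster_count >= 4085 && out->cluster_count < 65525;
}
```

A check like this is also roughly what a forensic tool could run against suspicious files such as the “hotfix.dat” example to spot a hidden volume that is stored unencrypted.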

Executing Our Proof-of-Concept

To establish the VFS, we created an emulated hard drive and implementations for both the volatile and non-volatile file system functionality. We did this for the volatile/in-memory VFS by allocating memory within the Windows kernel heap. Setting up the non-volatile/persistent file system involved mapping a file on disk that contains the hidden file system into memory. This two-pronged solution avoids much of the complexity associated with using unallocated hard disk space outside the normal Windows file system to store the data for the persistent VFS.
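The in-memory backing store can be pictured as a flat buffer with bounds-checked reads and writes. The following user-mode sketch uses `calloc` as a stand-in for a kernel pool allocation (a driver would use something like `ExAllocatePoolWithTag` instead); the `ram_disk` type and its functions are hypothetical names chosen for illustration.

```c
#include <stdint.h>
#include <stdlib.h>
#include <string.h>

#define SECTOR_SIZE 512u

/* Hypothetical backing store for the volatile file system. */
typedef struct {
    uint8_t *data;
    uint64_t size_bytes;
} ram_disk;

/* Allocate a zero-filled disk; returns 0 on success, -1 on failure. */
int ram_disk_init(ram_disk *d, uint32_t sector_count)
{
    if (sector_count == 0) {
        return -1;
    }
    d->data = calloc(sector_count, SECTOR_SIZE);
    d->size_bytes = (uint64_t)sector_count * SECTOR_SIZE;
    return d->data != NULL ? 0 : -1;
}

/* Reject any request that would run past the end of the disk.
 * Written to avoid overflow in offset + length. */
static int range_ok(const ram_disk *d, uint64_t offset, uint32_t length)
{
    return offset <= d->size_bytes && length <= d->size_bytes - offset;
}

int ram_disk_write(ram_disk *d, uint64_t offset, const void *src,
                   uint32_t length)
{
    if (!range_ok(d, offset, length)) {
        return -1;
    }
    memcpy(d->data + offset, src, length);
    return 0;
}

int ram_disk_read(const ram_disk *d, uint64_t offset, void *dst,
                  uint32_t length)
{
    if (!range_ok(d, offset, length)) {
        return -1;
    }
    memcpy(dst, d->data + offset, length);
    return 0;
}

void ram_disk_free(ram_disk *d)
{
    free(d->data);
    d->data = NULL;
    d->size_bytes = 0;
}
```

The persistent variant would keep the same read and write interface but point at a mapped view of the container file, which is why both file systems can sit behind one emulated disk.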

We used the same names as Turla with “Hd1” and “Hd2” representing the persistent and in-memory file systems respectively. We also emulated Turla by leveraging the same “RawDisk1” and “RawDisk2” device names when creating device objects.

Accessing the Virtual Filesystem

You might be asking yourself: “How do I access the VFS without mounting it to a drive letter, as this would be quite obvious to the end-user?” To answer this question, we needed to dig deeper into how the drive-letter mapping mechanism works. We also needed to look into the inner workings of the Windows file paths mechanism. MSDN has a great article discussing the inner workings of files, paths, and namespaces in Windows titled “Naming Files, Paths, and Namespaces” [7].

Win32 Device Namespace

In our proof-of-concept scenario, we could map the filesystem to a drive letter, but we knew this would be apparent to the end user. Instead, we can use the Win32 device namespace, which allows direct access to physical disks and volumes without going through the file system. The previously mentioned article from MSDN describes how this functionality works by leveraging the “\\.\” prefix, as follows:

The “\\.\” prefix will access the Win32 device namespace instead of the Win32 file namespace. This is how access to physical disks and volumes is accomplished directly, without going through the file system, if the API supports this type of access. You can access many devices other than disks this way (using the CreateFile and DefineDosDevice functions, for example) [8].

The article then explains that the Win32 Device Namespace resides within the NT namespace within a subfolder called “Global??”. Essentially, we can expose hard disk drive partitions, etc. as device objects and make them externally accessible through the Win32 Device Namespace. Specifically, we would create a symlink between the “Device” subdirectory and the accessible “GLOBAL??” subfolder within the NT namespace containing the Win32 Device Namespace, as follows (emphasis mine):

There are also APIs that allow the use of the NT namespace convention, but the Windows Object Manager makes that unnecessary in most cases. To illustrate, it is useful to browse the Windows namespaces in the system object browser using the Windows Sysinternals WinObj tool. When you run this tool, what you see is the NT namespace beginning at the root, or “\”. The subfolder called “Global??” is where the Win32 namespace resides. Named device objects reside in the NT namespace within the “Device” subdirectory. Here you may also find Serial0 and Serial1, the device objects representing the first two COM ports if present on your system. A device object representing a volume would be something like “HarddiskVolume1”, although the numeric suffix may vary. The name “DR0” under subdirectory “Harddisk0” is an example of the device object representing a disk, and so on.

To make these device objects accessible by Windows applications, the device drivers create a symbolic link (symlink) in the Win32 namespace, “Global??”, to their respective device objects. For example, COM0 and COM1 under the “Global??” subdirectory are simply symlinks to Serial0 and Serial1, “C:” is a symlink to HarddiskVolume1, “Physicaldrive0” is a symlink to DR0, and so on. Without a symlink, a specified device “Xxx” will not be available to any Windows application using Win32 namespace conventions as described previously. However, a handle could be opened to that device using any APIs that support the NT namespace absolute path of the format “\Device\Xxx”. [8]

Executing Our Proof-of-Concept

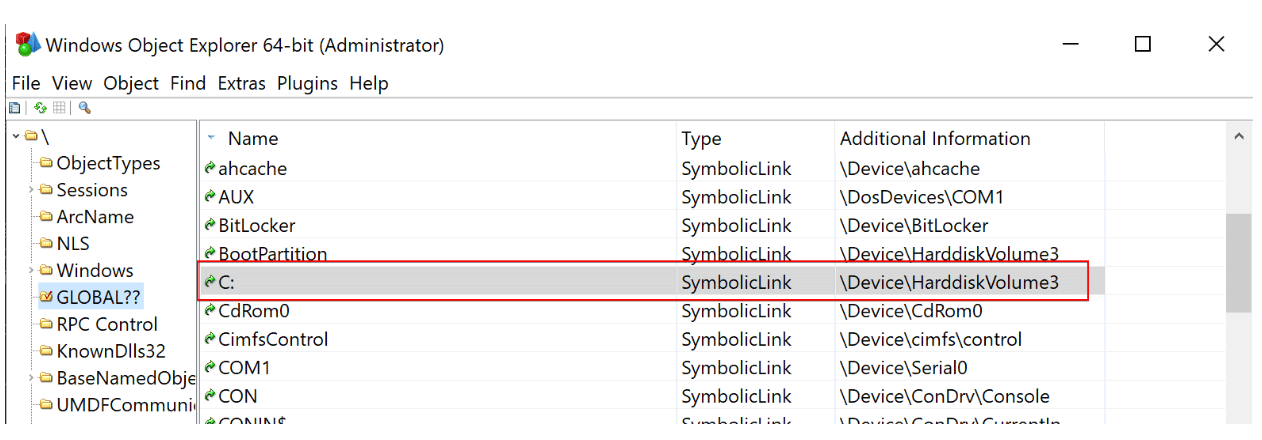

We can examine this mechanism by leveraging the “Windows Object Explorer 64-Bit” (WinObjEx64) utility from hfiref0x. Here we can see a symlink created within the Win32 Device Namespace to allow access to the filesystem on “\Device\HarddiskVolume3” as demonstrated in Figure 1.

Figure 1: Using WinObjEx64 to view information objects within the Win32 Device Namespace

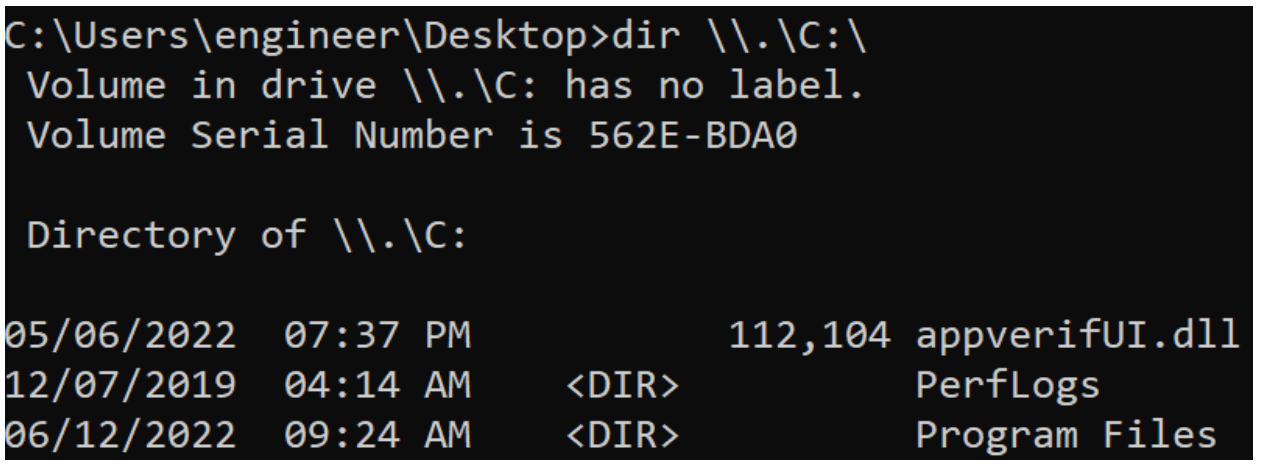

We can access the drive using the “\\.\” notation to access the device through the Win32 Device Namespace as shown in Figure 2:

Figure 2: Listing the contents of the C: drive using the “\\.\” prefix

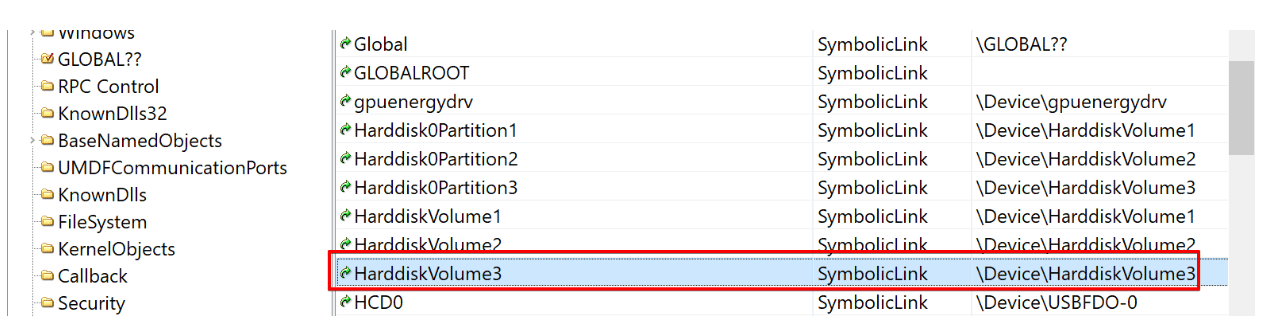

However, we also can see a symlink named “HarddiskVolume3” that allows access to the same “\Device\HarddiskVolume3” object within the NT namespace (see Figure 3).

Figure 3: Identifying a symlink exposing access to the same volume as the C: drive

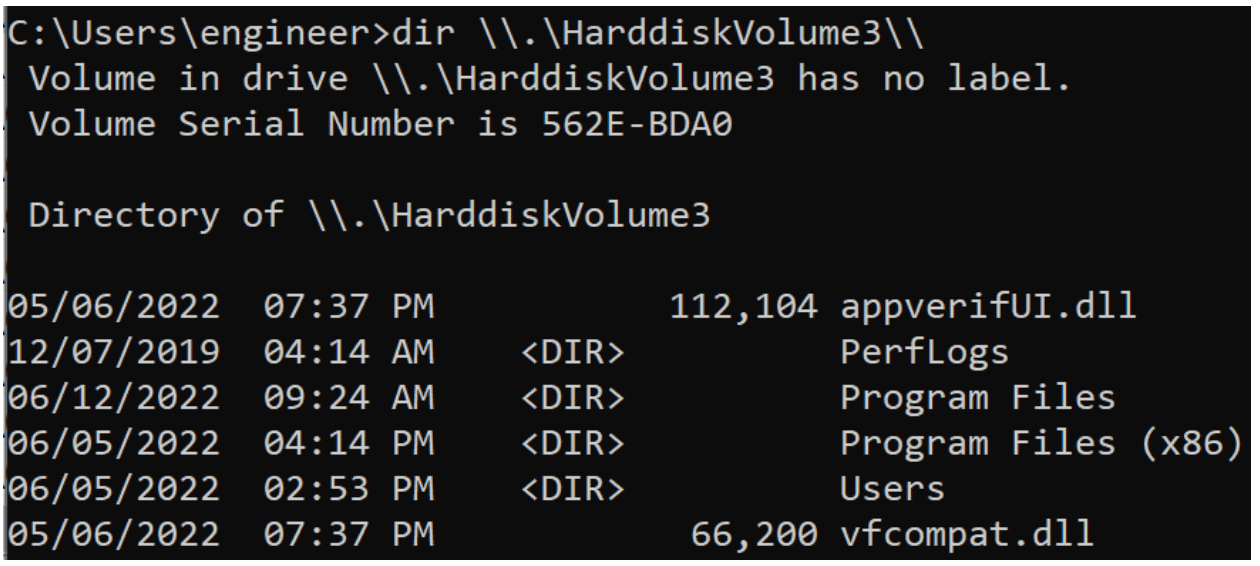

We can use this symlink to access the file system mapped by the “C:” drive (see Figure 4).

Figure 4: Accessing the C: drive using an alternative symlink to the same underlying device

We can facilitate access to our VFS in the same manner by exposing the VFS through the Win32 Device Namespace and leveraging the “\\.\” notation as shown previously. This allows us to access the file system without directly mounting it to a drive letter which could expose us to the end user.
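The relationship between a “\\.\” path and the symlink directory can be illustrated with a small string transformation. This is a simplified sketch only: Windows performs the real conversion internally, and the function name `win32_device_path_to_nt` is hypothetical. It assumes the link lives directly under “GLOBAL??” as described in the quoted article.

```c
#include <stdio.h>
#include <string.h>

/* Rewrite a Win32 device-namespace path such as \\.\Hd1\Keylog.txt into
 * the object-manager path where its symlink would be looked up.
 * Returns 0 on success, -1 if the prefix is missing or the buffer is
 * too small. */
int win32_device_path_to_nt(const char *win32_path, char *out, size_t out_len)
{
    static const char prefix[] = "\\\\.\\";        /* the text \\.\      */
    static const char nt_dir[] = "\\GLOBAL??\\";   /* symlink directory  */
    const size_t prefix_len = sizeof(prefix) - 1;
    int written;

    if (strncmp(win32_path, prefix, prefix_len) != 0) {
        return -1;
    }
    written = snprintf(out, out_len, "%s%s", nt_dir,
                       win32_path + prefix_len);
    return (written >= 0 && (size_t)written < out_len) ? 0 : -1;
}
```

Under this model, a symlink named “Hd1” in that directory is all an application needs in order to reach the hidden volume, and no drive letter ever appears in Explorer.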

Bypassing Driver Signature Enforcement

One of the primary hurdles in developing kernel-mode rootkits for recent versions of Windows is the Driver Signature Enforcement security control. This control restricts the loading of kernel-mode drivers to those with a trusted signature, which on recent versions of Windows requires an Extended Validation (EV) code signing certificate and a signature from Microsoft.

Assorted Methods

Turla and the ProjectSauron APTs have used the technique of loading a known vulnerable signed driver and subsequently exploiting a known memory corruption vulnerability in that driver to execute Ring0 stager shellcode. They then use this shellcode to load a second stage device driver. This technique is quite useful since it’s easy to deploy and requires neither core system modifications nor a system reboot.

Other methods of bypassing driver signature enforcement include enabling test signing mode (this results in a watermark being displayed and certain DRM-related features being disabled), using bootkits (e.g. TDL4, Rovnix, etc.), and leveraging stolen code signing certificates from compromised device manufacturers. Many of these techniques are less useful than they used to be. For example, Microsoft now requires developers to submit all device drivers to Microsoft for quality assurance testing before the drivers can be loaded. This potentially limits the usefulness of a stolen code signing certificate. Additionally, many systems now support Secure Boot, which limits the effectiveness of bootkits. Windows also has a feature that blocks the loading of known-vulnerable device drivers using a denylist when certain security settings such as Hypervisor-protected code integrity (HVCI) are enabled.

Executing Our Proof-of-Concept

For our use case, we elected to continue emulating Turla and loaded a known-vulnerable signed driver. We then exploited it to run second-stage shellcode, which uses a custom loader to execute a malicious device driver in memory. We accomplished this by leveraging the Turla Driver Loader (TDL) tool from hfiref0x, which loads a vulnerable VirtualBox device driver and exploits it to run kernel-mode shellcode [9].

Implementing Compatibility with Turla Driver Loader

TDL uses a custom portable executable (PE) loader that can cause compatibility issues with mitigation technologies such as Spectre mitigations, stack canaries, or control flow guard. Additionally, the loader does not invoke the DriverEntry function with a valid pointer to a driver object, so we had to make some modifications to account for this. Furthermore, on newer versions of Windows, the loader invokes the DriverEntry function with an IRQL of DISPATCH_LEVEL [9], which requires some special workarounds.

Modifying Compiler and Linker Flags

For these reasons, in order for our device driver to load correctly with the TDL loader, we needed to make minor modifications to our code and the application’s compiler flags. First, we needed to modify compiler and linker flags because specific security controls will insert code into the application to perform security checks. These controls include compiler-level security controls such as control flow guard, SafeSEH, and stack canaries.

Often, this can result in the insertion of code that requires particular actions by the linker or otherwise is not compatible with a minimalistic custom PE loader. Generally, my approach is to disable as many of these optimizations and other compiler or linker features as possible when they could cause problems. This is largely because we don’t care about having the most optimized or smallest code. Likewise, compatibility with exploit mitigation technologies isn’t a major requirement for red team tooling.

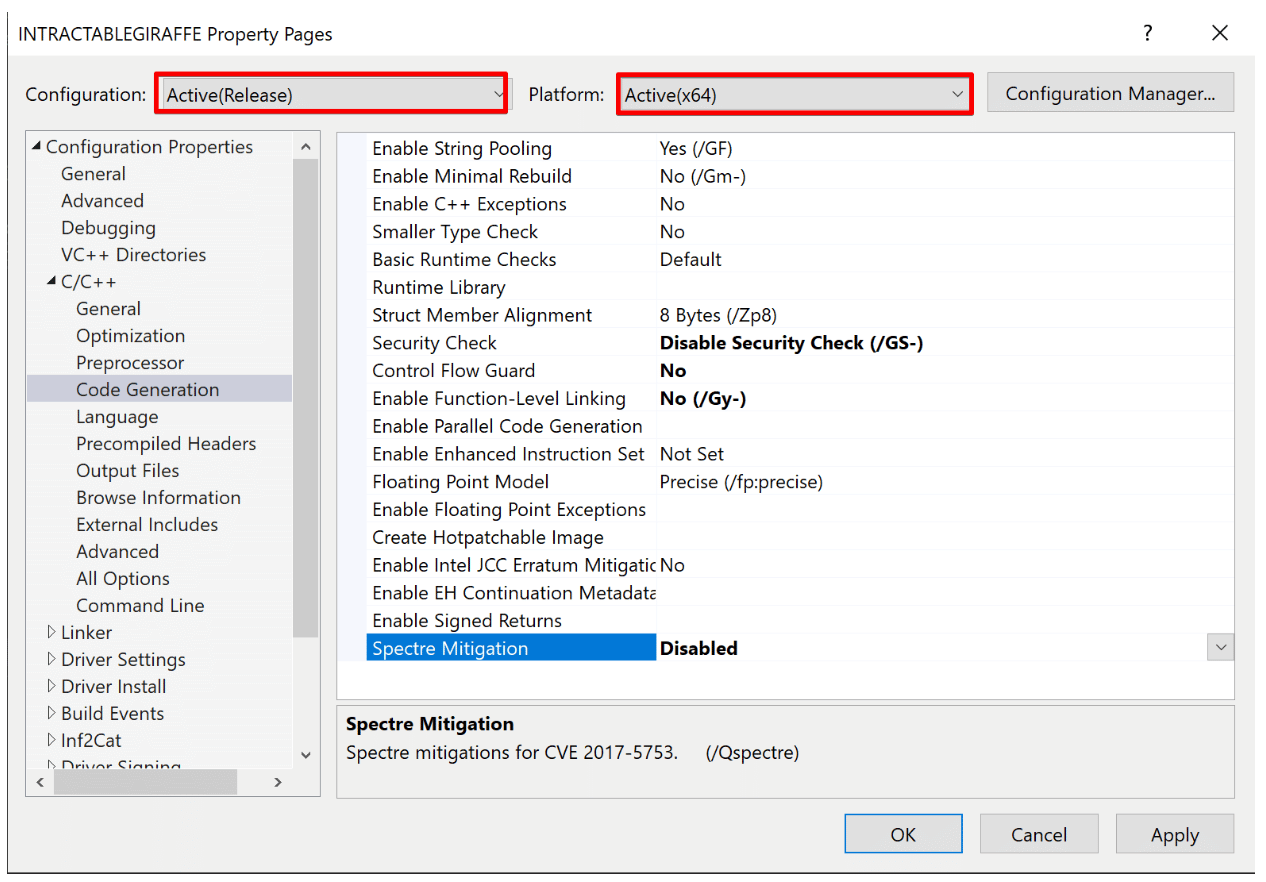

Figure 5 shows the security controls that we disabled when modifying the project settings. We also had to compile the project in “Release” mode when we wished to load the rootkit using TDL. Compiling in the alternative “Debug” mode appeared to break compatibility with the TDL PE loader.

Figure 5: Modifying compiler flags for compatibility with TDL

Modifying the Driver Entry Point

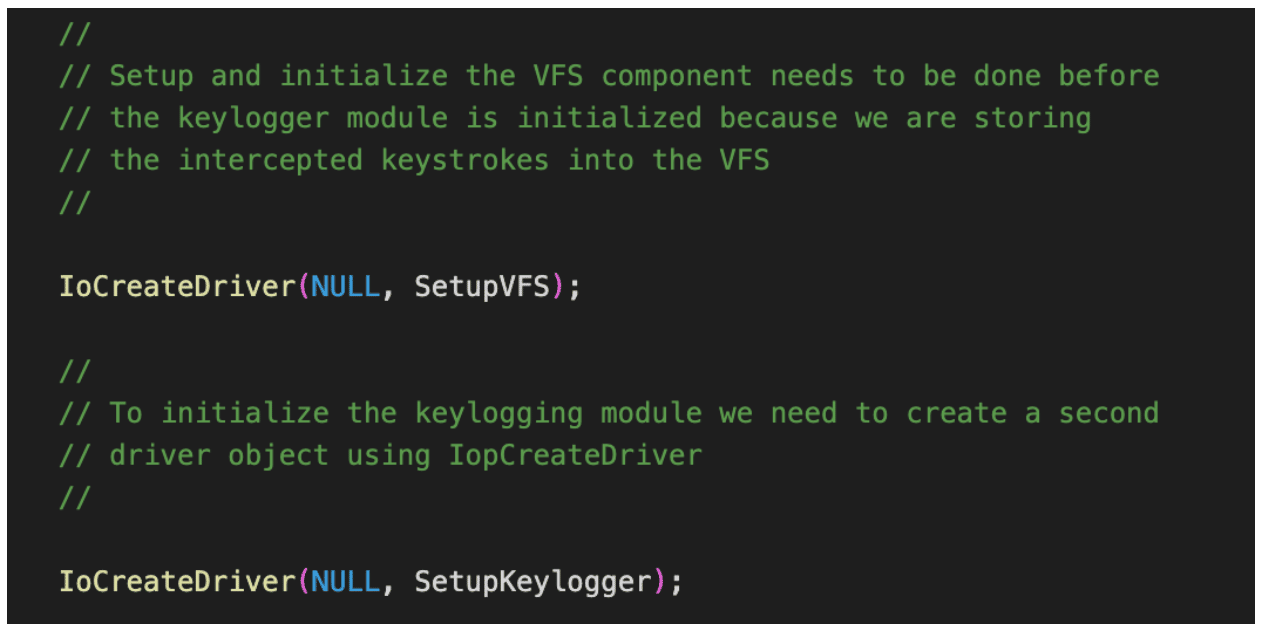

After modifying the compiler and linker flags, we needed to make some modifications to the DriverEntry function (essentially the main function of a device driver) to support compatibility with TDL. First, the DriverEntry function did not receive a valid driver object when invoked by TDL. Instead, we needed to use the function IoCreateDriver to invoke the SetupVFS and SetupKeylogger functions with a valid driver object, as demonstrated in Figure 6.

Figure 6: Invoking IoCreateDriver to maintain compatibility with TDL

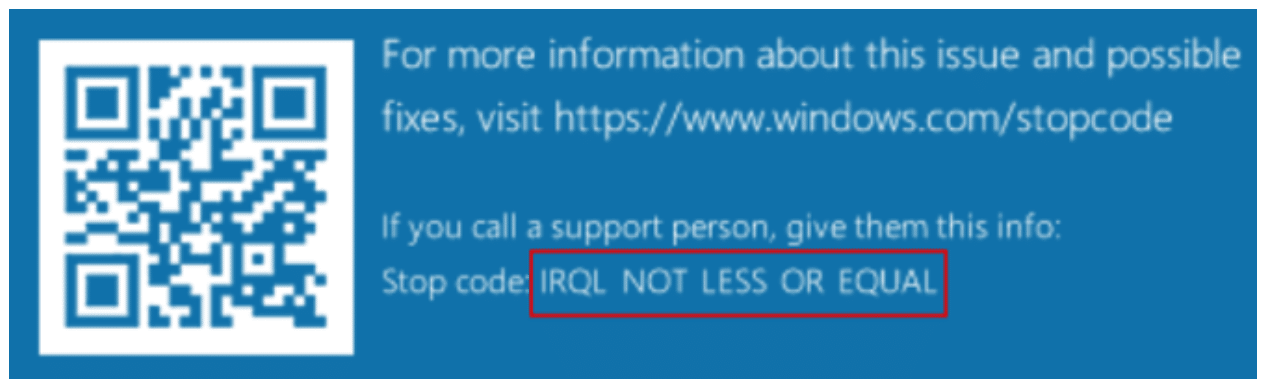

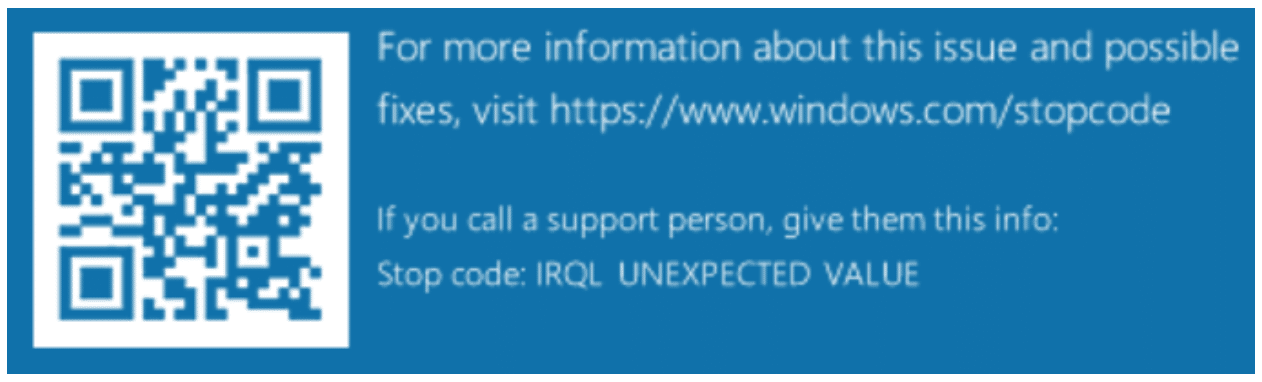

At first, I believed this was the only modification required for compatibility with TDL. Unfortunately, after making this modification and attempting to load the driver I was greeted with a blue screen of death (BSOD) indicating that an error “IRQL NOT LESS OR EQUAL” had occurred (see Figure 7).

Figure 7: The “IRQL NOT LESS OR EQUAL” message displayed

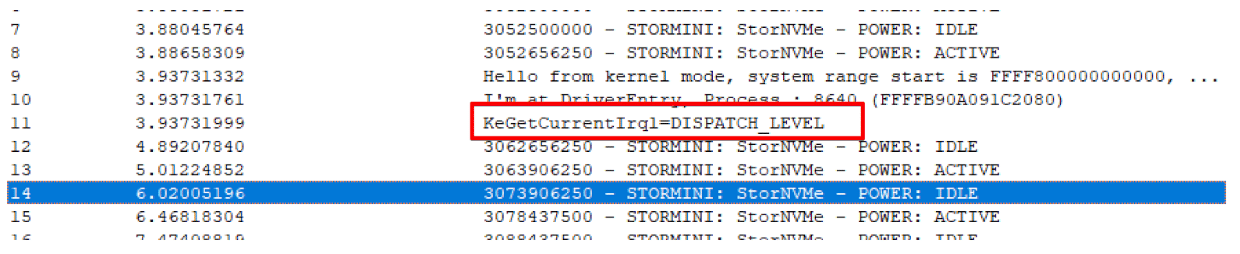

I quickly noted in the TDL README that on versions of Windows 10 newer than RS2 the shellcode invokes the DriverEntry function at DISPATCH_LEVEL. Unfortunately for our purposes, the original developers wrote the DriverEntry logic under the assumption that it would always be invoked at PASSIVE_LEVEL. Figure 8 shows a debugging statement indicating that DriverEntry was invoked at a higher IRQL than expected:

Figure 8: A debug statement indicating that the DriverEntry function was invoked at DISPATCH_LEVEL

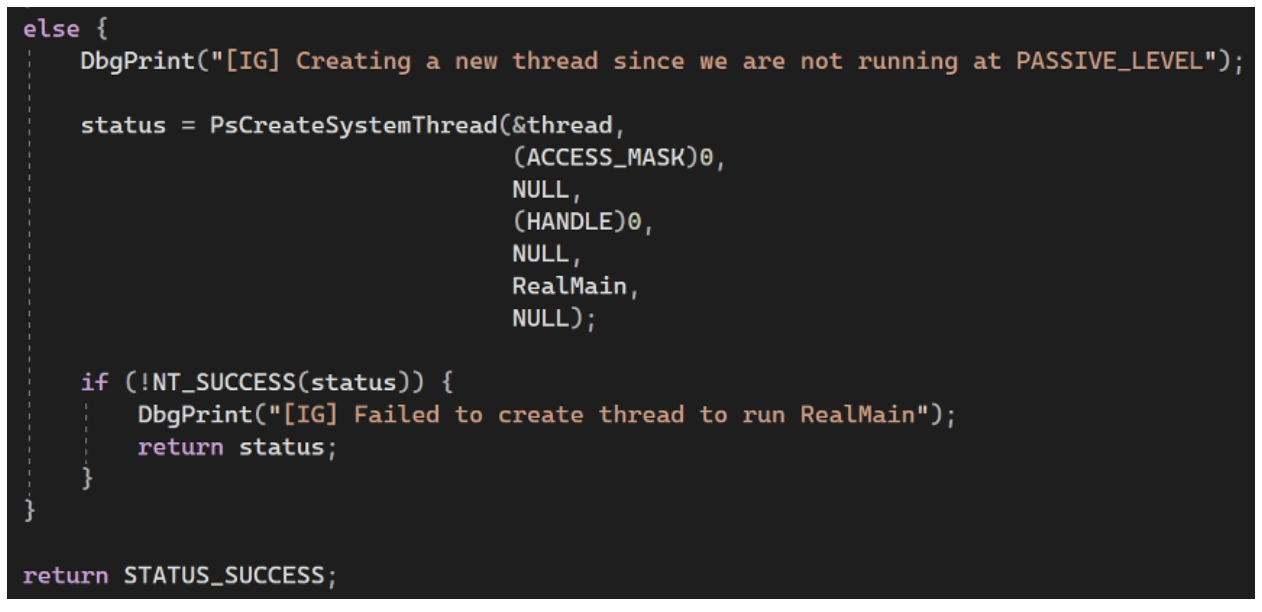

I initially thought invoking the “PsCreateSystemThread” function would be a sufficient workaround. In this scenario, my plan was to invoke the “RealMain” function by creating a new thread that executed at a lower IRQL. The code I leveraged for this attempt appears in Figure 9:

Figure 9: An initial attempt to lower the IRQL by creating a new system thread

Unfortunately, I had forgotten that PsCreateSystemThread can only be invoked with an IRQL of PASSIVE_LEVEL and was greeted with another BSOD, as seen in Figure 10.

Figure 10: The error message generated when attempting to create a system thread at an IRQL of DISPATCH_LEVEL

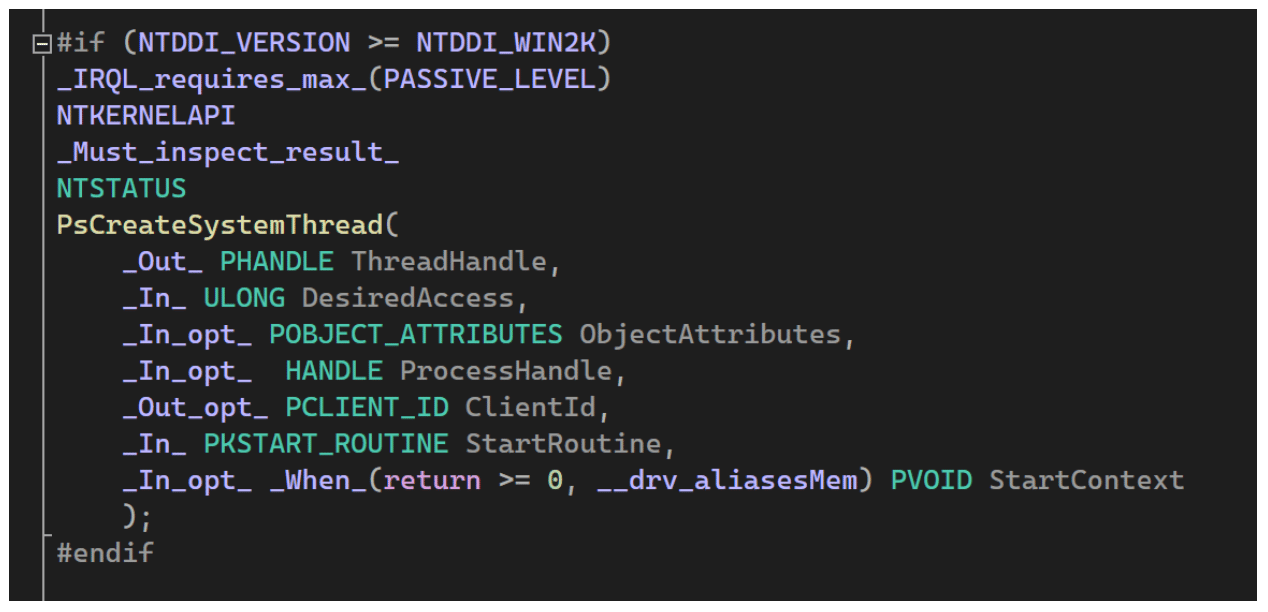

Digging further into PsCreateSystemThread, I found its definition within a header file with the “_IRQL_requires_max_(PASSIVE_LEVEL)” annotation, confirming the function could only be invoked at PASSIVE_LEVEL (see Figure 11).

Figure 11: The header file indicating the PsCreateSystemThread function can only be invoked at an IRQL of PASSIVE_LEVEL

Next, I began trying to think of methods I could leverage to invoke my code at a lower IRQL. I initially thought I could queue a kernel-mode APC to defer execution of my main function until it could be executed asynchronously at a lower IRQL. While I do believe this would have worked, my coworker, Nathan Tucker, suggested a better solution. He mentioned that we could use the IoQueueWorkItem [10] function to queue a work item that would execute asynchronously at an IRQL of PASSIVE_LEVEL. Unlike PsCreateSystemThread, IoQueueWorkItem may itself be called at DISPATCH_LEVEL, and a system worker thread later runs the callback at PASSIVE_LEVEL. Figure 12 shows the code that accomplished this.

Figure 12: A second attempt that invokes IoQueueWorkItem to execute code at a lower IRQL

After making this modification I was able to successfully invoke my primary initialization code with an IRQL of PASSIVE_LEVEL. This in turn allowed for the completion of required operations related to creating system threads and reading/writing to files on the filesystem (these operations are not possible at an IRQL of DISPATCH_LEVEL).

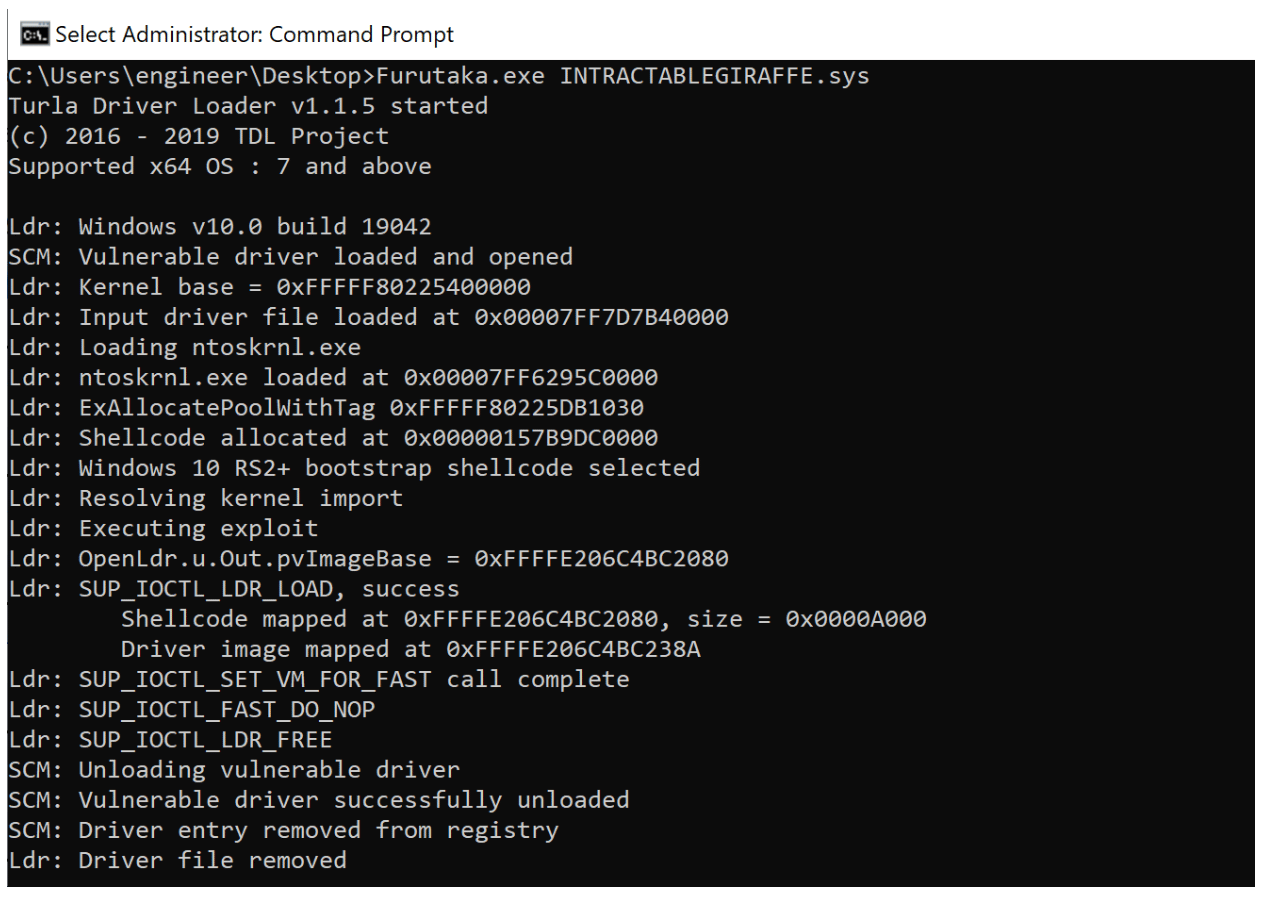

In Figure 13 we invoke the “Furutaka.exe” utility included with TDL to load our rootkit into the system. We can see in this image that the tool loads a vulnerable device driver, exploits it to run shellcode, and then leverages a custom PE loader to map our device driver into memory, perform relocations, resolve addresses within the export address table, etc.

Figure 13: Loading the rootkit using the TDL driver loader utility named “Furutaka.exe”

This was the final necessary modification that enabled us to successfully load our device driver with the TDL loader. We could successfully interact with the rootkit thereafter.

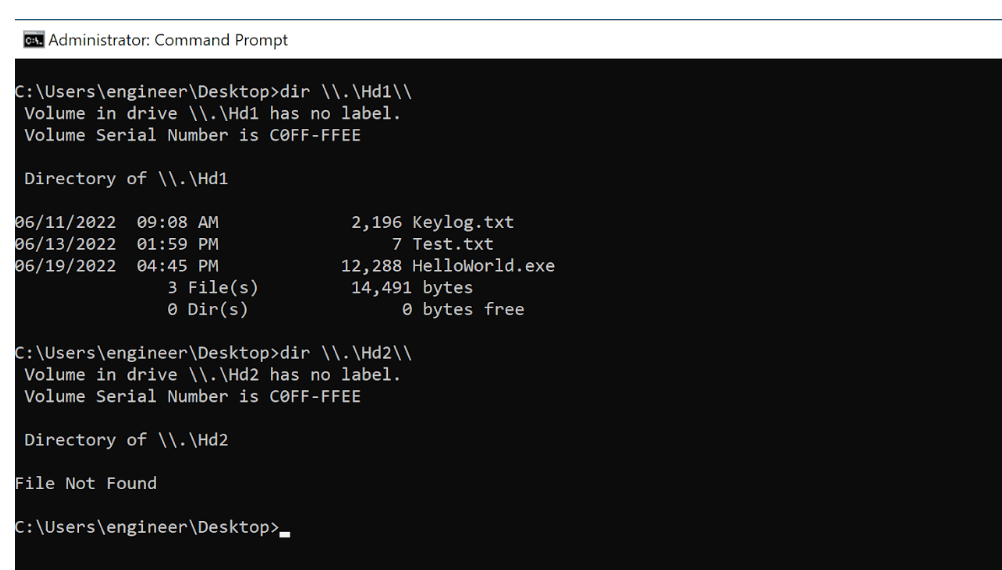

Demoing the VFS and Keylogger Functionality

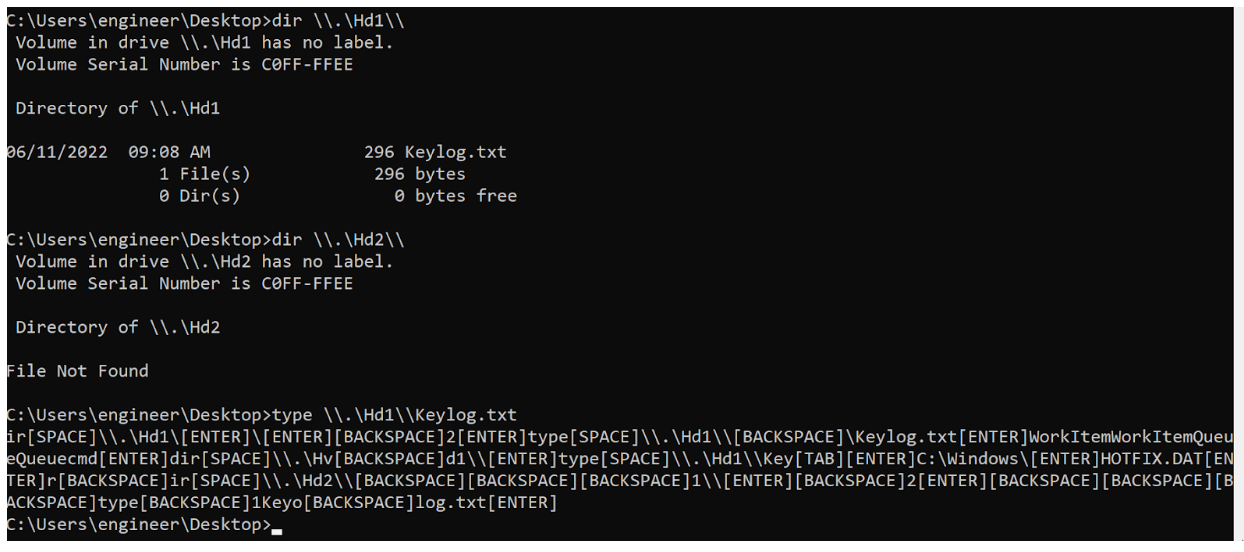

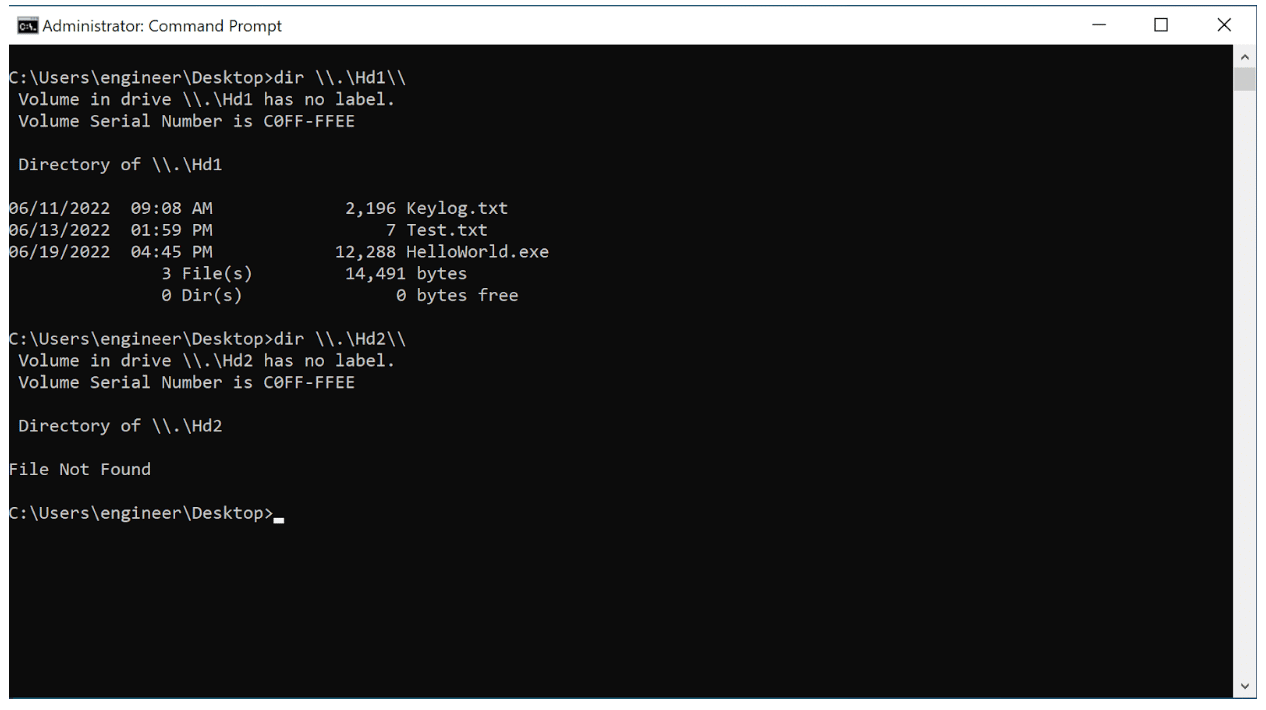

In this section, we walk through a quick demo of the VFS and keylogger functionality. First, we run the commands “dir \\.\Hd1” and “dir \\.\Hd2” to list the contents of the non-volatile and volatile file systems. The keylogger configuration defaults to writing to “\\.\Hd1\Keylog.txt”, which contains a log of all keystrokes entered by the user (including backspaces, etc.). To read the contents of the “Keylog.txt” file, we run the command “type \\.\Hd1\keylog.txt”, which presents the contents of this file (see Figure 14).

Figure 14: Listing the contents of the non-volatile and volatile virtual file systems

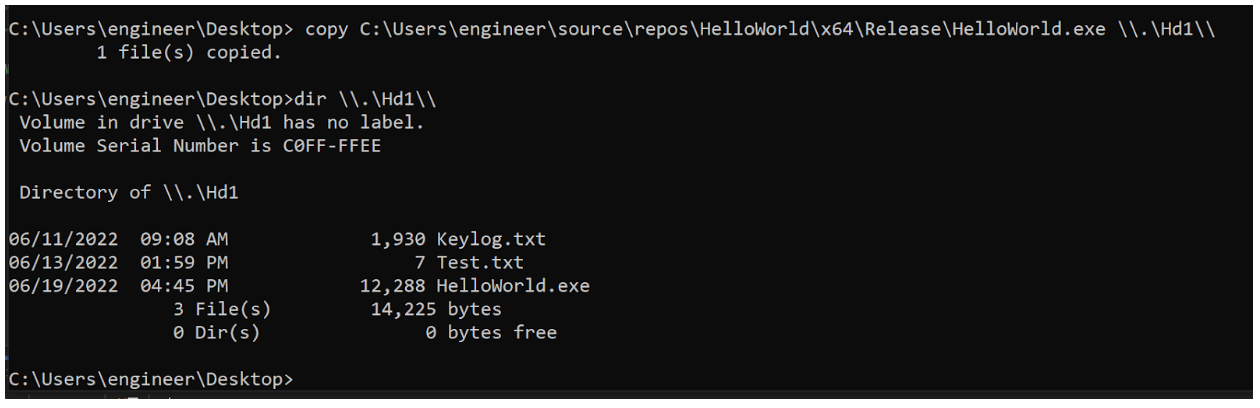

To demonstrate storing a file to the non-volatile VFS we copy a binary named “HelloWorld.exe” into the volume, as seen in Figure 15.

Figure 15: Copying a Hello World binary to the non-volatile file system

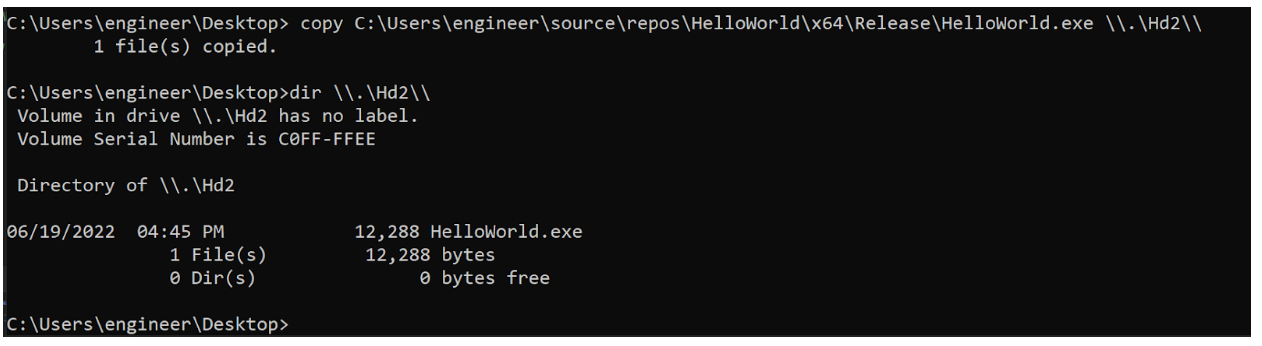

We perform the same action targeting the volatile VFS, as shown in Figure 16:

Figure 16: Copying a Hello World binary to the volatile file system

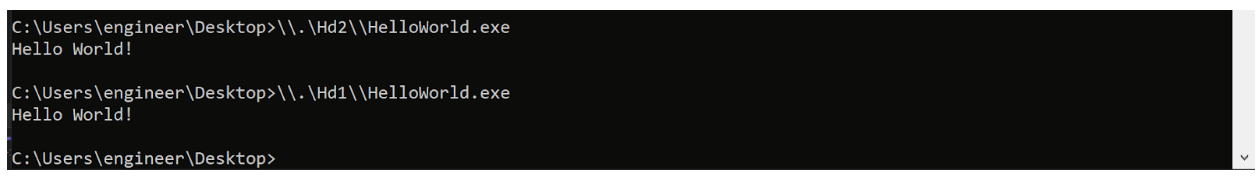

In Figure 17 we then execute both binaries to demonstrate this functionality as well.

Figure 17: Demonstrating execution of the Hello World binary within both virtual file systems

Next, we reboot the system and reload the rootkit using Furutaka.exe (not shown). Then we list the contents of the non-volatile and volatile file systems. In Figure 18, we observe that the non-volatile/persistent VFS still contains the file “HelloWorld.exe”, but the volatile/in-memory VFS is empty as expected.

Figure 18: Listing the contents of the volatile and non-volatile file systems after rebooting the system and reloading the rootkit

Policy on Releasing Offensive Tooling

Generally, when releasing offensive security tooling, we try to mitigate any potential adverse impact. In this case, we weren’t concerned about publishing this tool for a few reasons. While the tool is helpful, it doesn’t give the attacker any additional privileges or access within a target environment. It also requires administrative access to install, and it intentionally does not implement key evasion features such as encryption.

Additionally, the utility is very similar to TrueCrypt or other tools in that it simply supports mounting a filesystem from what is effectively a file on disk or an in-memory ramdisk. Fundamentally, the tool doesn’t exploit anything. Rather, its primary purpose is evasion of certain forensic indicators and emulation of a specific threat actor’s capabilities. Finally, similar open source keyloggers already exist in the public domain, so we are not releasing any entirely new capability for the keylogger component.

Operational Considerations

We have numerous examples of nation state actors and advanced persistent threat (APT) groups utilizing kernel-mode capabilities such as rootkits. Unfortunately, kernel-mode malware remains a comparatively under-explored area for red teams.

The lack of red team usage examples may stem from a purely practical reason. When developing any capabilities that perform core system modifications, load a device driver, or inject into a core Windows system process, we must take extreme care to avoid adversely impacting the client’s system. Our red teams generally have hesitated to deploy kernel-mode capabilities within a client’s production environment because of the risk that the driver could crash or otherwise impair a production system.

Often, we simply avoid deploying that capability if the system is critical in nature and instead only leverage it on less critical, non-core, non-production systems. We also can deploy a less sophisticated capability to avoid making core system modifications. This highlights the trade-off between effectiveness and operational security. While the more sophisticated technique seems better for evasion, at least at face value, a system crash or malfunction is a great way to trigger an investigation.

Future Work

One potential future improvement might be implementing IOCTLs to support mounting and unmounting hidden filesystems. Currently, the source code of the application essentially hard codes the configuration of the rootkit. Modifying the driver to support IOCTLs would allow the dynamic creation of hidden filesystems.

We also should incorporate expanded support for additional file systems beyond FAT16. The FAT16 file system imposes some limitations on file size which hinder the operational effectiveness of the tool.
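The size limits follow from simple arithmetic. The sketch below assumes the commonly cited ceiling of 65,524 data clusters for a FAT16 volume and the 32-bit file size field stored in each directory entry; the function names are hypothetical.

```c
#include <stdint.h>

/* Largest number of data clusters a volume can have and still be FAT16. */
#define FAT16_MAX_CLUSTERS 65524u

/* Upper bound on the data area of a FAT16 volume for a given geometry. */
uint64_t fat16_max_data_bytes(uint32_t bytes_per_sector,
                              uint32_t sectors_per_cluster)
{
    return (uint64_t)FAT16_MAX_CLUSTERS * bytes_per_sector *
           sectors_per_cluster;
}

/* A single file is capped by whichever is smaller: the 32-bit size field
 * in its directory entry or the space the volume can hold. */
uint64_t fat16_max_file_bytes(uint32_t bytes_per_sector,
                              uint32_t sectors_per_cluster)
{
    const uint64_t by_size_field = UINT32_MAX;
    const uint64_t by_volume =
        fat16_max_data_bytes(bytes_per_sector, sectors_per_cluster);
    return by_volume < by_size_field ? by_volume : by_size_field;
}
```

With 512-byte sectors and 32 KiB clusters, both functions return 2,147,090,432 bytes, so neither a hidden volume nor any file inside it can reach 2 GiB with that geometry.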

Encryption is another key feature required to fully operationalize the tool. Ideally, both the in-memory and persistent file systems should remain encrypted whether on disk or in memory. Data decryption should only occur when the device driver receives and validates a request.

We also could extend the IOCTL interface beyond the dynamic creation of hidden VFSs. This could include adding functionality that removes callbacks configured by EDRs, effectively blinding or disabling certain detection capabilities. Additionally, we could add capabilities to support reading and writing to LSASS process memory. This would enable us to bypass the “RunAsPPL” control meant to protect the reading and writing of LSASS process memory.

From an anti-reverse engineering perspective, we could define a preprocessor macro for all the debugging print statements in the codebase. This preprocessor macro could then remove any debugging print statements that might be included within the compiled binary. For production malware, this helps to hinder analysis while still supporting local debugging during development. Additionally, we’ve previously released some LLVM compiler plugins to support dynamic junk code insertion and automatic encryption of strings within compiled binaries. We could develop these and additional LLVM plugins to further obfuscate the compiled driver and hinder analysis.
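One way such a macro might look is sketched below in portable C. The names `VFS_DEBUG`, `DBG_PRINT`, and `mount_hidden_volume` are hypothetical, and `fprintf` stands in for the kernel's `DbgPrint`; the WDK's own `KdPrint` macro follows a similar pattern by compiling to nothing in non-debug builds.

```c
#include <stdio.h>

/* Define VFS_DEBUG (for example with -DVFS_DEBUG) in development builds. */
#ifdef VFS_DEBUG
#define DBG_ENABLED 1
#define DBG_PRINT(...) fprintf(stderr, __VA_ARGS__)
#else
#define DBG_ENABLED 0
/* Expands to a no-op, so the format strings are never compiled in. */
#define DBG_PRINT(...) ((void)0)
#endif

/* Hypothetical call site showing that the logic is identical in both
 * build flavors; only the diagnostic output differs. */
int mount_hidden_volume(const char *name)
{
    if (name == NULL || name[0] == '\0') {
        DBG_PRINT("mount failed: empty volume name\n");
        return -1;
    }
    DBG_PRINT("mounting hidden volume %s\n", name);
    return 0;
}
```

Because the arguments are discarded by the preprocessor in release builds, strings such as "mounting hidden volume" would not appear in the compiled binary for an analyst to search for.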

Conclusion

Reimplementing functionality from an advanced persistent threat actor’s toolkit is a great way to gain additional perspective into their methodology, capabilities, and mindset. From both a purple team and red team perspective, I think there is tremendous value in reverse-engineering the capabilities leveraged by real-world threat actors and implementing their tools and techniques in a way that closely mirrors their actual abilities. It is an effective way to learn malware development.

When combined with threat intelligence reports, such as the RUAG case study, this type of exercise provides valuable information for red teams seeking to emulate the techniques utilized by modern threat actors in order to enhance the realism of their engagements. Unfortunately, most red teams have a limited research and development budget that would not permit them to fully replicate a complex toolkit such as Uroburos. Reimplementing just the VFS capability itself took quite a bit of time. However, I do think there is a middle-ground where red teams unable to fully emulate and replicate a complex implant could emulate other aspects such as the delivery mechanism or dropper, implement a subset of the functionality of the toolkit, or leverage similar command and control mechanisms, phishing techniques, etc. that are easier to replicate.

References

[1] https://artemonsecurity.com/uroburos.pdf

[2] https://www.govcert.ch/downloads/whitepapers/Report_Ruag-Espionage-Case.pdf

[4] https://docs.microsoft.com/en-us/windows-hardware/drivers/hid/keyboard-and-mouse-class-drivers

[5] https://www.oreilly.com/library/view/rootkits-subverting-the/0321294319/

[6] https://www.codeproject.com/Articles/43144/Keystroke-Monitoring

[7] https://docs.microsoft.com/en-us/dotnet/standard/io/file-path-formats

[8] https://docs.microsoft.com/en-us/windows/win32/fileio/naming-a-file?redirectedfrom=MSDN#paths

[9] https://github.com/hfiref0x/TDL

[10] https://www.matteomalvica.com/blog/2021/03/10/practical-re-win-solutions-ch3-work-items