Hello everyone! I first want to introduce myself, my name is Anthony Marquez and I am the newest member of Praetorian’s technical team.

I’m excited to join a group of such bright individuals and work with a company that values the promotion of thought leadership and realizes the importance of allowing their employees to take on interesting side projects. I hope to be contributing to several blog posts in the future. For my first post I wanted to talk about one of the first side projects that I recently completed here at Praetorian —ROTA.

TECH PUZZLES BACKGROUND

In an effort to attract and distinguish the top talent in the industry, we created a series of Tech Puzzles for all those interested in a challenge. We believe the tech puzzles provide a means of testing an applicant’s problem solving skills and their desire to take on tough problems. After a bit of research and some brainstorming, we decided that the next tech puzzle to implement would be the ROTA challenge. ROTA can simply be described as an ancient Roman version of tic-tac-toe (check out the ROTA challenge page for more information).

I found the challenge of creating such a game to be exciting and a great side project that would introduce several new topics to me. Coming from an engineering background, I did not have traditional computer science training because most of my academic work was focused on hardware. However, I love learning and knew this project would expose me to new topics and hopefully result in a fun game for everyone to play.

My first iteration of the game was developed utilizing a Finite State Machine (FSM) type architecture. A FSM type architecture attempts to create an exhausted list of game states and then determine the optimum move for the computer player to make. The problem with this approach is that the process of drawing out state diagrams quickly becomes increasingly complex and makes it difficult to update the code if future algorithms were to be implemented. Overall, I knew there had to be a simpler way to program such a game, and it was probably something that computer science majors learn in their first year of classes. So with some searching on Google I stumbled across the Minimax Algorithm, a fundamental AI algorithm. Many games like checkers, othello, chess, and tic-tac-toe are implemented using the Minimax Algorithm as they are games of full/perfect information (meaning it is possible to see all future moves). Since the game of ROTA is often compared to tic-tac-toe, I realized that this would be the perfect algorithm for my next implementation of it.

MINIMAX AND OPTIMIZATIONS

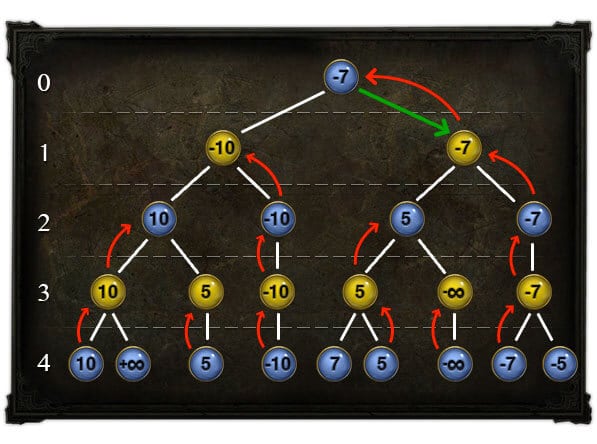

I found the whole topic of the Minimax Algorithm to be quite interesting. There are several books and other resources available on the topic and I recommend looking at them when you get a chance. The concept for the Minimax Algorithm begins with defining a scoring system for action outcomes and states of a game. For example, in the game of tic-tac-toe if the game is scored with respect to the computer player: a win would mean a positive score, a loss would mean a negative score, and draw would be a neutral (zero) score. By having a defined score, each set of moves and the resulting outcome can be compared to one another. The possible outcomes for the game are often represented by a search tree. An example of one is shown below:

In the search tree the blue nodes are moves of the maximizing player (the computer in our case) and the yellow nodes represent the moves of the minimizing player (the opponent). The branches that connect each parent node to its children represent the possible choices for each player. For a game like ROTA or chess, where an endless draw (a.k.a. stalemate) is possible, a decision path may have infinite number of levels often requiring a maximum depth (number of levels in the tree) to be often specified. This is done to prevent the algorithm from continuing down the tree, resulting in an infinite recursive loop (and likely crash due to insufficient memory). The nodes at the bottom of the search tree are called the leaf nodes, they contain the score for the state of the game after a decision path has been taken (all the corresponding moves have made). To determine which move should be made by the maximizing player at the root node, we start at the leaf nodes and move up one level to the parent nodes. If the parent node is a maximizing node, the value of the child node with the maximum value is assigned to parent node. If the parent node is a minimizing node, the value of the child node with the minimum value is assigned to parent node. The algorithm continues this process until it reaches the root node. The final value determines the move a player should make in order to minimize the maximum possible loss. The logic above is translated into the following psuedocode:

Psuedocode:

function minimax(node, depth, Player)if depth = 0 or leaf(node)return evaluate(node)if Player = MaxPlayerval := infinityfor each child of nodetempVal := max(α,minimax(child, depth-1, not(Player) ))if tempVal > val, val:= tempValreturn valelseval := -infinityfor each child of nodetempVal := max(α,minimax(child, depth-1, not(Player) ))if tempVal < val, val:= tempValreturn val(* Initial call *)minimax(origin, MaxDepth, MaxPlayer)

The size of a search tree generated by a game like ROTA can be quite large due to the possibility of endless draws and a maximum depth is often specified to mediate this; however traversing an entire ROTA search tree can still be an expensive process as each parent node can contain several children nodes (branching factor) thus quickly increasing the total number of nodes in the tree. The more time it takes to generate and traverse the search tree, the longer the response time from the computer between moves which leads to a poor user experience.

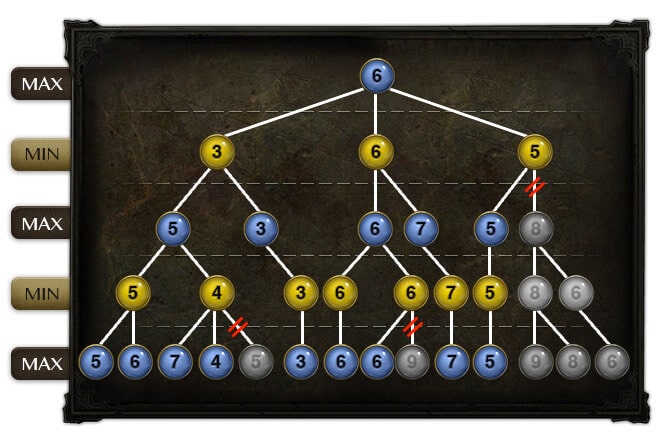

To further optimize the algorithm, alpha-beta cutoffs are often implemented in a Minimax Algorithm (the process is called alpha-beta pruning). Alpha-beta cutoffs are used to reduce the search tree size by reducing the number of branches in the tree that need to be evaluated. It does this by adding two more variables to the function call which make it easier to track the score for the best move in the current level of the search tree being examined. If the next branch/move being evaluated results in an equivalent or worse score, and will not influence the final decision, the algorithm stops evaluating the branch and all the possible subtrees. The figure below shows alpha-beta pruning in effect where entire subtrees are ignored (in this example moves are being evaluated from left to right).

The resultant psuedocode looks as follows:

function alphabeta(node, depth, α, β, Player)if depth = 0 or leaf(node)return evaluate(node)if Player = MaxPlayerfor each child of nodeα := max(α, alphabeta(child, depth-1, α, β, not(Player) ))if β ≤ αbreak (* Beta cut-off *)return αelsefor each child of nodeβ := min(β, alphabeta(child, depth-1, α, β, not(Player) ))if β ≤ αbreak (* Alpha cut-off *)return β(* Initial call *)alphabeta(origin, depth, -infinity, +infinity, MaxPlayer)

Pro Tip: Adding alpha-beta cutoffs into the Minimax Algorithm, will result in a noticeable decrease in processing time coupled with the ability to specify a higher maximum depth. This increases the likelihood that your algorithm is truly “unbeatable”.

BRINGING IT ALL TOGETHER

As I began coding up ROTA, I knew I was going to create two versions of the game. The first version would include a user interface that could be played manually and the other was a web service. I decided to start with the user interface version first since having a front-end component would make it much easier to debug the code, as I would be able to see the game moves as they occurred.

Creating the user interface portion of the game was a strait forward task, and soon enough I had completed a working version of the game. I then decided to translate my client-side Javascript code to a server-side coding language.

Finally, after some internal feedback, we decided to develop multiple difficulty levels for the user interface version of the game. It would allow human players to learn the nuances of the game while playing the game on “Easy” mode and avoid becoming frustrated with losing to an “unbeatable” opponent. This was the last feature added to the game before we released the game to a select number of beta testers.

Overall, it was fun experience. Once I got everything working, I got that utter feeling of accomplishment that makes coding all the worthwhile. Even after the initial release I continue to look for ways to refine the game and improve the overall experience. I have a few updates in mind and plan to roll out new changes gradually.

We hope this new challenge proves to be one worthy of your time and we appreciate any feedback you could provide us in the comment section below. Good luck!