The capabilities of modern AI models have advanced far beyond what most people in the security industry have fully internalized. AI-generated phishing, script writing, and basic offensive automation are getting plenty of attention, but what happens when you apply agentic AI to the full lifecycle of building, testing, and refining custom malware and command-and-control (C2) infrastructure against real defenses?

At Praetorian, we’ve embraced AI-driven offensive security workflows that would have been impossible even a few months ago. What we’ve learned from building and operating these systems has entirely reshaped how we think about the offense-defense balance.

Build, Test, Evade, Repeat

Consider what it takes to build custom malware or C2 agents from scratch. Traditionally, that’s a weeks-long project requiring deep systems programming expertise. You’re building software that can communicate covertly with an attacker, execute commands on a compromised machine, avoid detection by security products, and persist through reboots and updates. Then comes the hardest part: testing it against real endpoint detection products, understanding why it gets caught, and iterating until it doesn’t. With agentic AI workflows, we’ve compressed that entire process from weeks into days. The results are fully functional, production-grade offensive tooling with dozens of operational commands, multiple communication channels, and evasion techniques specifically engineered to bypass real endpoint security products.

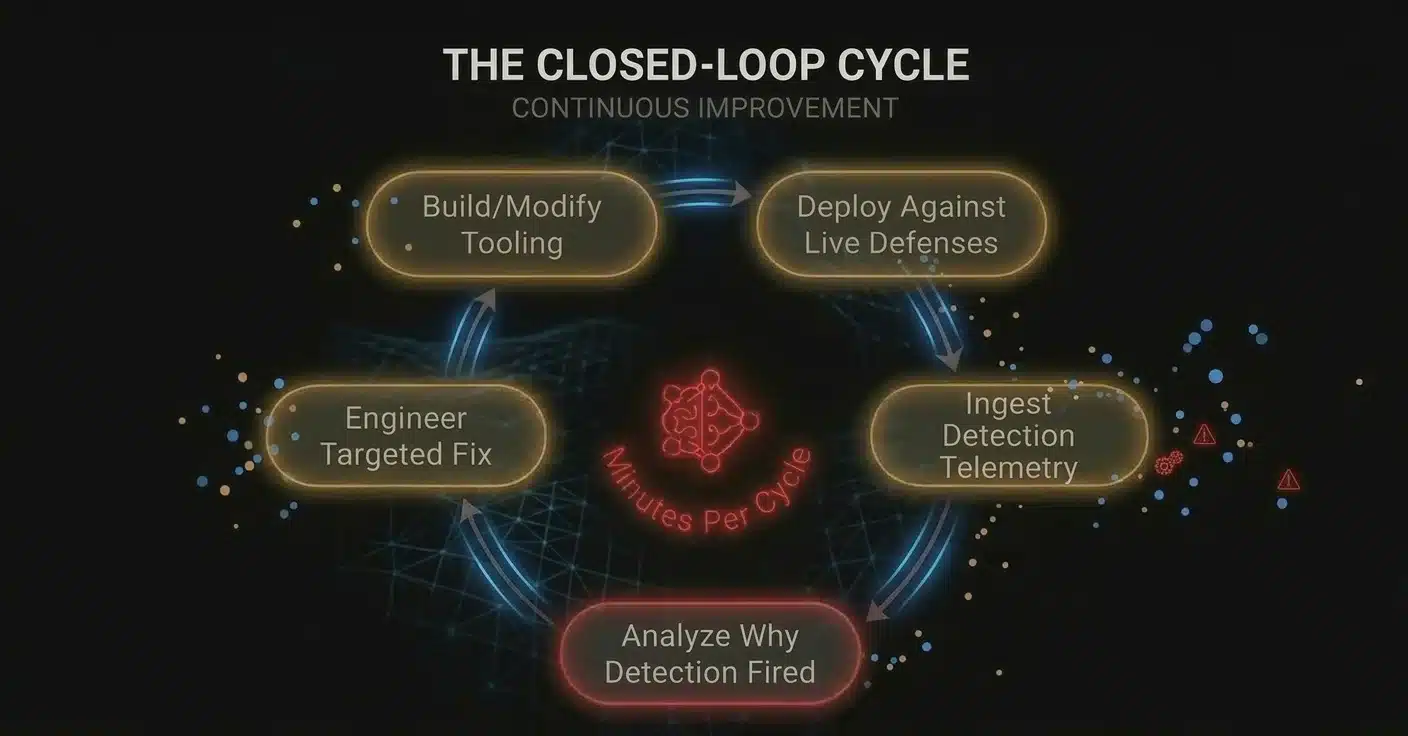

With these workflows, an operator simply points the AI agents at a target detection stack and tells them to iterate until the tooling evades. The agents deploy against production-grade endpoint defenses in a controlled environment and ingest the resulting detection telemetry. This isn’t a binary pass/fail. Modern EDR products produce rich, structured alert data: the specific detection rule that fired, the MITRE ATT&CK technique it mapped to, the confidence score from ML classifiers, the behavioral indicators that contributed to the alert, and in many cases the exact features of the binary or process behavior that triggered the detection.
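To make "rich, structured alert data" concrete, here is a minimal sketch of what one such alert record might look like from a detection engineer's side, and how a batch of alerts could be tallied by detection layer. The `EdrAlert` class, its field names, and the sample values are all assumptions for illustration; they do not reflect any particular vendor's schema.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class EdrAlert:
    """Hypothetical alert record; field names are illustrative, not a vendor schema."""
    rule_name: str                    # the specific detection rule that fired
    attack_technique: str             # MITRE ATT&CK technique ID, e.g. "T1055"
    detection_layer: str              # assumed values: "signature", "ml", "behavioral"
    confidence: float                 # classifier confidence, assumed to be in [0.0, 1.0]
    indicators: tuple[str, ...] = ()  # behavioral indicators that contributed


def count_by_layer(alerts: list[EdrAlert]) -> dict[str, int]:
    """Count how many alerts each detection layer produced."""
    return dict(Counter(alert.detection_layer for alert in alerts))


def techniques_seen(alerts: list[EdrAlert]) -> list[str]:
    """Return the distinct ATT&CK technique IDs, sorted for stable output."""
    return sorted({alert.attack_technique for alert in alerts})


if __name__ == "__main__":
    # Made-up sample data, for illustration only.
    sample = [
        EdrAlert("static-packer-match", "T1027", "signature", 1.0),
        EdrAlert("suspicious-binary-score", "T1027", "ml", 0.87),
        EdrAlert("cross-process-write", "T1055", "behavioral", 0.92,
                 ("remote memory write", "unusual parent process")),
    ]
    print(count_by_layer(sample))
    print(techniques_seen(sample))
```

A tally like this gives a quick read on which layer is actually doing the work in a given test run, which is the kind of question the rest of this post turns on.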

The AI agents parse that telemetry, identify the specific aspect of the tooling that caused the alert, and produce a new variant that addresses the detection mechanism directly. Not randomly. Not by trying hundreds of mutations and seeing what sticks. By reasoning about the detection logic and engineering a targeted fix.

Then they do it again. And again. Each cycle takes minutes, not days. Whether the detection is a static signature, an ML classifier, or a behavioral rule, the agents reason about the specific mechanism and engineer the appropriate fix.

From Hypothetical to Operational

AI-driven offensive security isn’t hypothetical. Reports of organizations compromised [1] [2] by basic AI-generated tools [3] are becoming routine. But agentic workflows enable something far more significant: custom malware and C2 infrastructure tailor-made for a specific organization’s environment and defenses. Bespoke offensive tooling is no longer expensive or time-consuming to produce.

What This Means for Detection

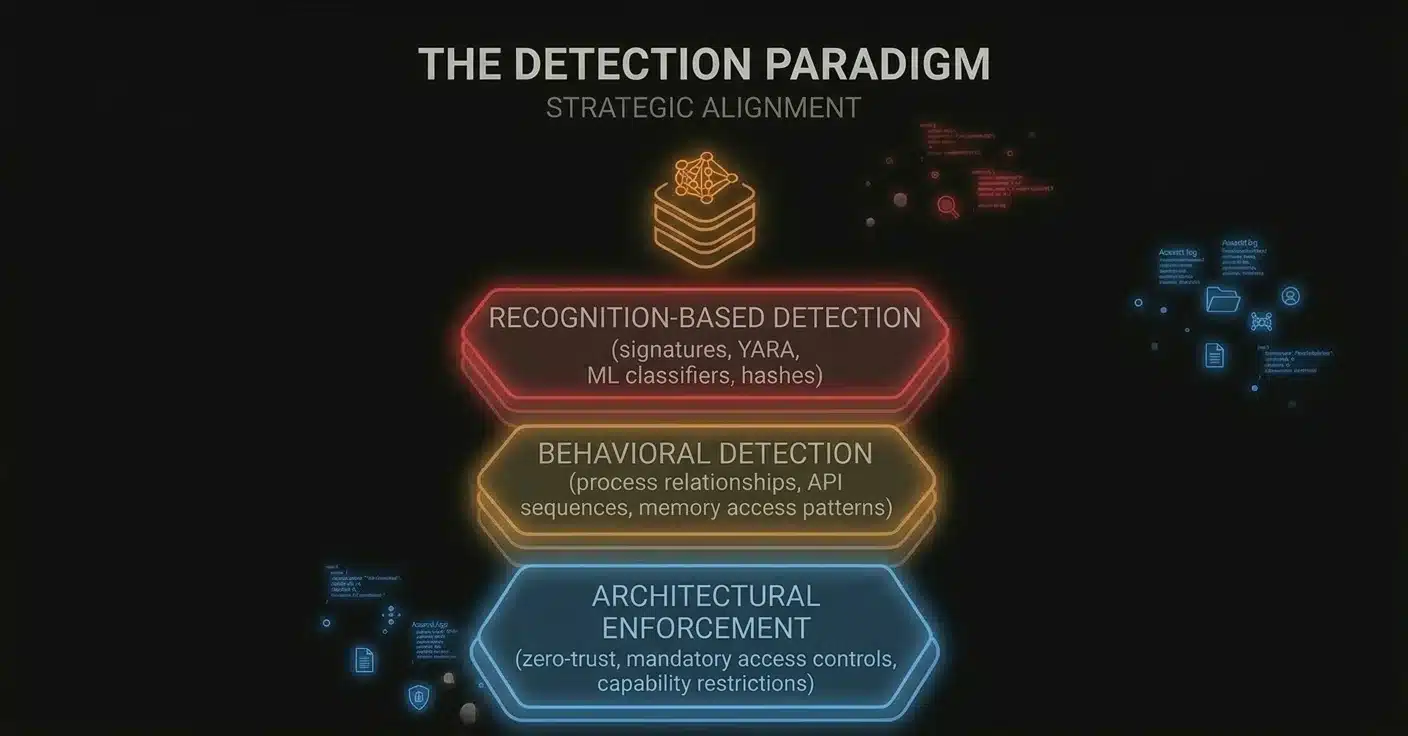

As AI-driven offensive security compresses retooling timelines, most endpoint security products are still built on recognition: static signatures, YARA rules, ML classifiers, and file reputation systems. They’re all asking some version of the same question: does this look like something we’ve seen before?

Recognition worked because retooling was expensive, so attackers had a strong incentive to reuse what they already had. Once building custom malware takes only days, and regenerating an agent variant takes an afternoon, that assumption breaks down. A detection engineer spends days crafting a new rule. An attacker feeds it back into the loop, and hours later, the implant looks completely different but works the same. At that cost, attackers can afford bespoke variants for every target.

This doesn’t make signature-based detection useless overnight. It still catches unsophisticated threats, and defense-in-depth still matters. But if recognition is the primary load-bearing wall of your detection strategy, the ground underneath it shifts fast.

The Limits of Behavioral Detection

Behavioral detection is harder to evade than signatures, but it’s not immune either. Behavioral rules monitor process relationships, track how memory is accessed, and flag anomalous sequences of system interactions. That’s a higher bar to clear than swapping byte patterns. But these same feedback loops apply to behavioral detections too. When AI can observe that a specific memory allocation technique or process interaction triggered a behavioral rule, it can research and apply alternative approaches that achieve the same result through a different mechanism. We’ve done exactly this in our own research, finding ways to perform operations like in-memory execution and process manipulation that don’t trigger the behavioral rules designed to catch them. Behavioral detection raises the cost of evasion, but with AI in the loop, that cost is still manageable.

Why Architectural Enforcement Is the Most Durable Layer

Architectural enforcement goes further. Instead of trying to detect malicious behavior, it makes certain actions impossible in the first place. Network segmentation means a compromised workstation simply can’t reach critical servers. Least-privilege access controls limit what any user or process can do, shrinking the attack surface an adversary has to work with.
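As a rough illustration of the difference between enforcing and detecting, the sketch below models network segmentation as a default-deny allow-list: a flow is permitted only if it was explicitly listed, so there is nothing for a cleverer binary to evade. The zone names, ports, and the `Flow` and `is_permitted` names are hypothetical and chosen only for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    """A network flow between two zones (hypothetical model)."""
    src_zone: str
    dst_zone: str
    port: int


# Hypothetical allow-list. Anything not listed here is denied.
ALLOWED_FLOWS = frozenset({
    Flow("workstations", "web-proxy", 443),
    Flow("jump-hosts", "critical-servers", 22),
})


def is_permitted(flow: Flow, allowed: frozenset = ALLOWED_FLOWS) -> bool:
    """Default-deny: a flow is permitted only if it is explicitly allow-listed."""
    return flow in allowed


if __name__ == "__main__":
    # A compromised workstation trying to reach a critical server directly.
    print(is_permitted(Flow("workstations", "critical-servers", 445)))  # False
    # The sanctioned administrative path through a jump host.
    print(is_permitted(Flow("jump-hosts", "critical-servers", 22)))     # True
```

The decision depends only on where the traffic comes from and where it is going, not on what the payload looks like or how it behaves. That is why this kind of control holds up even when the tooling on the compromised host changes.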

These aren’t detection mechanisms that can be evaded with a smarter binary. They’re constraints on the environment itself. Today, though, most security controls don’t operate at this level of prevention, and the familiar cat-and-mouse game currently favors attackers.

The industry needs to shift its center of gravity. While signatures still have a role, and behavioral detection raises the bar meaningfully, the most durable layer is architectural enforcement because it doesn’t depend on detecting the attack at all. The strongest defensive postures combine all three. Architectural controls form the foundation, behavioral detection serves as the next line of defense, and recognition-based methods catch what they can on top.

[1] Check Point Research: VoidLink – Early AI-Generated Malware Framework

[2] Microsoft Security Blog: AI as Tradecraft – How Threat Actors Operationalize AI

[3] AWS Security Blog: AI-Augmented Threat Actor Accesses FortiGate Devices at Scale

Where Praetorian Fits in AI-Driven Offensive Security

This is why we’ve invested so heavily in building these systems. We maintain purpose-built environments where AI-driven systems iterate against production-grade defenses, and we use what we learn to directly inform how we advise our clients on their defensive posture. When we recommend that a client rethink their architectural approach or layer their defenses differently, that recommendation is grounded in having watched specific detection strategies hold up or fall apart against AI-accelerated offensive tooling firsthand.

The same AI capabilities accelerating offense can be applied to defense. The closed-loop workflows we’ve built for offensive testing translate directly into defensive validation, and when paired with attack surface management, they give organizations a much clearer picture of where their defenses actually stand against modern threats.

The landscape is evolving quickly. We’re excited to be pushing the boundaries of what’s possible with AI in offensive security, and we believe the work we’re doing will help shape what effective defense looks like as these capabilities mature. The organizations that invest in understanding this shift early will be better positioned as AI-driven offensive tooling becomes more widespread.

If you’re interested in learning how these capabilities can strengthen your organization’s security posture, get in touch with our team.