Building a security program from the ground up is a complex undertaking that can pay massive dividends down the road. We firmly believe that “the devil is in the details”: the more thought an organization invests in organizing its framework (see Part 1 of this series) and planning how to measure against it (see Part 2 of this series), the greater the benefit it will derive from its security program over the long term. In this third and final part of the series, we discuss how organizations can make meaningful progress toward their established target state(s) by balancing competing priorities. Organizations with effective security programs prioritize recommendations to achieve their target state by balancing the following key considerations:

- Security relevance: denotes the recommendation’s contribution to risk reduction.

- Breadth of applicability: refers to the number of controls a recommendation affects.

- Ease of implementation: describes where a recommendation falls on the continuum between quick wins and longer term initiatives.

The same organizations also recognize that working toward a target state is much more important than continually increasing an absolute score. Rather than chasing the score itself, they use scoring metrics to answer stakeholder questions and concerns while maintaining focus on their target state.

Determining Actions & Recommendations

We discussed in Part 2 of the series that organizations must establish appropriate target states. Any category scored below the assigned target state should have an associated recommendation or action item. Some organizations may also set a minimum threshold, such as a score of two or below, at which they require recommendations regardless of target state. The most important aspect of this step, however, is to set aside considerations of severity, effort, or score impact and simply document a recommendation for every category that missed its target state. Prioritizing recommendations is a separate task in the implementation process.
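The gap-identification rule above can be sketched in a few lines of Python. This is a minimal illustration rather than a prescribed implementation: the `CategoryScore` structure, the `needs_recommendation` helper, the `EXAMPLE-*` identifiers, and all of the scores and targets shown are hypothetical, and we assume integer maturity scores.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CategoryScore:
    category: str  # control or objective identifier (hypothetical values below)
    current: int   # assessed maturity score
    target: int    # target state assigned to this category


def needs_recommendation(score: CategoryScore,
                         minimum_threshold: Optional[int] = None) -> bool:
    """Return True when the category missed its target state, or when it sits
    at or below the optional organization-wide minimum threshold."""
    if score.current < score.target:
        return True
    return minimum_threshold is not None and score.current <= minimum_threshold


# Illustrative data only; these scores and targets are assumptions.
scores = [
    CategoryScore("PR.AC-3.1", current=2, target=3),
    CategoryScore("EXAMPLE-1", current=3, target=3),
    CategoryScore("EXAMPLE-2", current=2, target=2),
]

# With a minimum threshold of two, EXAMPLE-2 is flagged even though it
# met its target state.
gaps = [s.category for s in scores if needs_recommendation(s, minimum_threshold=2)]
print(gaps)  # ['PR.AC-3.1', 'EXAMPLE-2']
```

Note that the check deliberately ignores severity, effort, and score impact; those considerations only enter during prioritization.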

Situations wherein an organization cannot determine a realistic and reasonable recommendation may indicate that the current or target state scores are inappropriate. In those cases, the organization needs to adjust the target state to maintain focus on its security goal. Recommendations should never be made just to satisfy a score requirement.

Recommendations versus Risk Statements

Some organizations prefer straightforward recommendations for actions, while others–most commonly those implementing a strong risk-based approach–find risk statements more appropriate. We can reframe the concept of recommendations as risk remediation activities, wherein the risk they’re addressing is apparent in the threat posed to the organization were they to forgo a given recommendation.

A recommendation regarding a gap should answer the following questions:

- How can we prevent x from happening?

- How would doing this limit the impact if it were to happen?

- To what extent would it limit the impact, were it not to mitigate the risk completely?

Conversely, a risk statement answers the same questions from a different perspective, as follows:

- What could happen?

- What would the impact be were it to happen?

- Why should we care?

When broken down in this way, we see how you could derive similar, yet not identical, information from each approach. We also see that the perspective you choose during this step in the process affects the answers you can provide to others in your organization in the future. Let’s look at an example of this in practice.

Real Life Example

In Part 2 of this series, we defined the following control:

- PR.AC-3.1 Remote Access logs are stored in (log provider or SIEM name)

Let us consider the case where the assessment of the Technology domain for this control yielded a maturity level of two, which we previously defined as “VPN, Endpoint Proxies, Virtual Desktops, or other remote technologies are utilized to facilitate remote access but are largely un-managed or implemented at a team level”. The gap here is that while remote access tools are in place, they are neither fully logged nor audited.

We can state the recommendation the following way: Company A should develop or adopt a centralized logging tool to ensure remote access logs are available to response teams, which would enable them to set up alerts for remote logins that meet certain criteria and to restrict access when such a login occurs.

Likewise, we could word the risk as follows: Company A does not manage or log its remote access tools, which would limit the ability of its security teams to respond quickly to an incident if someone fraudulently obtained remote access credentials. The end result of this could be an attacker accessing confidential company data and the production environment, costing Company A millions in reputational damage.

Once you have created a list of these recommendations or risks, the next step is to start prioritizing them.

Prioritizing Based On Security Relevance

The goal of any security program should be to reduce the amount of risk the organization must accept. While many frameworks do not directly address risk, any collection of controls and objectives is designed to minimize risk in a general sense. The NIST CSF follows a logical flow of events, whereas the Critical Security Controls (CSCs) use implementation groups to indicate which controls are most likely to significantly reduce risk. In the CSC structure, Implementation Group One consists of the controls that will impact risk most directly.

That all being said, each recommendation for achieving the target state for any objective or control also should carry its own risk reduction rating. At a minimum, organizations should assess the risk-reduction impact of a recommendation or action item as high, medium, or low. Organizations with more robust risk management programs may be able to use a more granular risk rating methodology or even begin to assign quantitative values (such as dollars) to recommendations.

The key here is that organizations consider a recommendation’s impact on risk first; however, this is only the first of three metrics organizations must balance to effectively assess recommendations.

Prioritizing Based On Breadth of Applicability

While security practitioners may want to prioritize solely based on risk reduction, as discussed above, other stakeholders–such as boards and executives–may want to prioritize recommendations based on more easily measurable metrics. These might include the overall score of the assessment or the number of controls or objectives that have met the target state. They want to maximize the measurable impact of recommendations while using the fewest resources possible.

Keeping this in mind, the most efficient way to achieve score improvement is to prioritize recommendations that apply to the most controls or objectives. Certain single recommendations can increase scores for many controls or objectives. In those cases, the overall score for the organization will increase much more than if it had implemented a recommendation aligned to a single control or objective. The caveat here is that this consideration is independent of the amount of work required or the potential for risk reduction.

This is not the most security-conscious way to approach improvements in security maturity. We must acknowledge, however, that boards and executives want to show significant progress toward maturity based on the money they invest in the program. Even so, striving for raw score improvement is not a winning strategy.

Prioritizing Based On Ease of Implementation

We would be remiss if we did not acknowledge that some organizations are simply going to want some quick wins. For highly resource-constrained organizations, quick wins may be the only work they can prioritize in short order. In other cases, easy-to-implement recommendations may be perfect for employees to tackle in between projects and tasks. While these recommendations are not likely to create significant increases in scores, they can help an organization show progress through the number of completed tasks.

Organizations utilizing this consideration need to define the level of effort at an organizational level for each recommendation under consideration. They can factor in the time they estimate they will need to implement a given recommendation, the number of teams they require to do the work, and the tooling cost (if any) to arrive at an understanding of the comprehensive level of effort for each recommendation. A subjective High, Medium, and Low scale may be sufficient in many organizations.

In some cases, high-risk-reducing recommendations may also be easy to implement, making the prioritization simple. In other cases, the risk reduction and effort both may be low, and prioritization may be more difficult.

Putting It All Together

Because no single metric should drive priority alone, organizations should balance all three metrics to determine an overall recommendation priority. To calculate a “Total Priority Metric” that accounts for all three of the prioritization constructs above, organizations should assign a scale for each metric along with specific criteria that create a reasonable number of High, Medium, and Low priority recommendations.

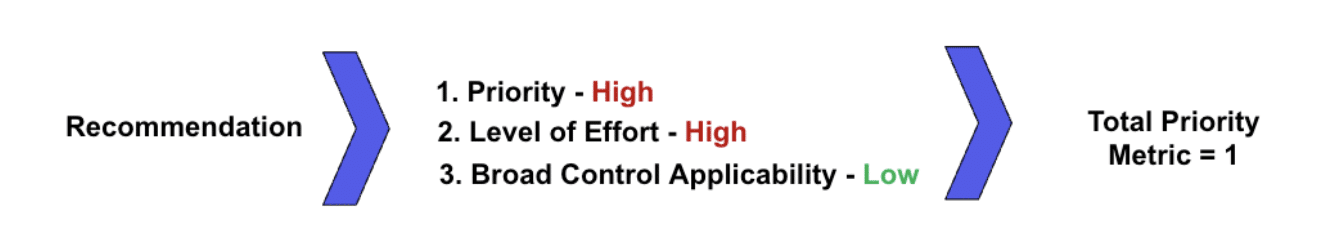

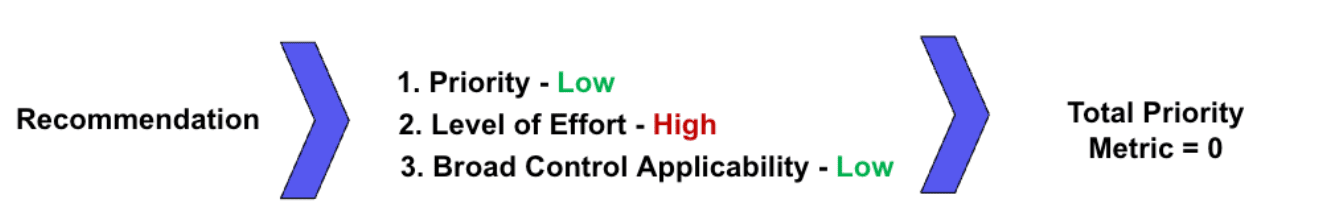

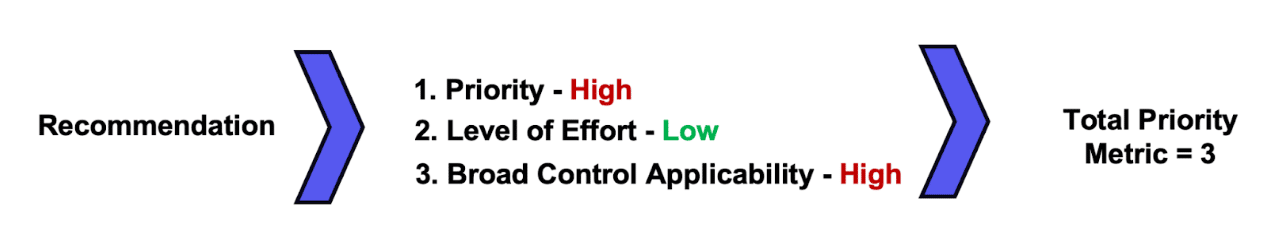

In Praetorian’s implementation, the risk-based metric is simply High, Medium, and Low. When a recommendation receives a High priority label for this metric, we add one to the overall Total Priority Metric.

For the breadth of applicability metric we simply count the number of aligned controls or objectives. In most cases, we have found that if a recommendation aligns with three or more controls or objectives, we should add one to the overall Total Priority Metric. We do increase this numeric threshold, though, if we find that more than 20 percent of the recommendations fall into the three-or-more category.
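One way to sketch that threshold adjustment is shown below. The function name `adjusted_breadth_threshold`, its parameters, and the sample counts are hypothetical, and raising the threshold one step at a time until no more than 20 percent of recommendations qualify is our assumption about how the increase could be carried out.

```python
def adjusted_breadth_threshold(control_counts, start=3, max_share_percent=20):
    """Return the smallest threshold >= start at which no more than
    max_share_percent of recommendations align with that many controls
    or objectives (or more)."""
    total = len(control_counts)
    if total == 0:
        return start
    threshold = start
    while True:
        qualifying = sum(1 for count in control_counts if count >= threshold)
        # Integer comparison avoids floating-point rounding at the boundary.
        if qualifying * 100 <= max_share_percent * total:
            return threshold
        threshold += 1  # assumption: raise the bar one control at a time


# Number of aligned controls per recommendation (illustrative data only).
counts = [1, 1, 2, 3, 4, 5, 1, 2, 1, 6]

# 4 of 10 recommendations align with three or more controls (40 percent),
# so the threshold rises until only 2 of 10 qualify.
print(adjusted_breadth_threshold(counts))  # 5
```

Raising the threshold this way keeps the breadth point meaningful: if too many recommendations earn it, it no longer helps distinguish between them.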

The ease of implementation metric receives a subjective rating of High, Medium, and Low in our implementation. Organizations could easily quantify it as something like Low = <30 days, Medium = 30-90 days, and High = >90 days, or utilize story points in an Agile organization. Any recommendation assigned a Low level of effort adds one to its Total Priority Metric.

Assigning Priority Levels

By calculating the Total Priority Metric this way, we can then assign an overall High priority to all recommendations with a Total Priority Metric of 2 or 3, Medium for a metric of 1, and Low for a metric of 0. Figures 1-3 provide examples of each Total Priority Metric level. A recommendation that an organization expects will reduce risk significantly, apply to many controls, and be easy to implement would take priority over a recommendation that they expect to reduce risk dramatically but only applies to one control and is hard to implement.

Figure 1: An example of a recommendation with a high Total Priority Metric

Figure 2: An example of a recommendation with a medium Total Priority Metric

Figure 3: An example of a recommendation with a low Total Priority Metric
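The scoring and priority rules described above can be expressed as a short Python sketch. The `Recommendation` structure, the function names, and the sample recommendations are hypothetical and serve only to illustrate the arithmetic; the breadth threshold of three is the default described earlier and can be raised where needed.

```python
from dataclasses import dataclass


@dataclass
class Recommendation:
    title: str
    risk_reduction: str    # "High", "Medium", or "Low"
    aligned_controls: int  # number of controls or objectives affected
    effort: str            # "High", "Medium", or "Low"


def total_priority_metric(rec, breadth_threshold=3):
    """Add one point for each of: High risk reduction, broad applicability,
    and Low level of effort."""
    metric = 0
    if rec.risk_reduction == "High":
        metric += 1
    if rec.aligned_controls >= breadth_threshold:
        metric += 1
    if rec.effort == "Low":
        metric += 1
    return metric


def priority_level(metric):
    """Map a Total Priority Metric (0 to 3) to an overall priority."""
    if metric >= 2:
        return "High"
    if metric == 1:
        return "Medium"
    return "Low"


# Illustrative recommendations; titles and ratings are assumptions.
recommendations = [
    Recommendation("Centralize remote access logging", "High", 4, "Medium"),
    Recommendation("Re-architect network segmentation", "High", 1, "High"),
    Recommendation("Refresh an internal policy template", "Low", 1, "Medium"),
]

for rec in recommendations:
    metric = total_priority_metric(rec)
    print(f"{rec.title}: metric={metric}, priority={priority_level(metric)}")
# Centralize remote access logging: metric=2, priority=High
# Re-architect network segmentation: metric=1, priority=Medium
# Refresh an internal policy template: metric=0, priority=Low
```

Because each metric contributes at most one point, no single consideration can push a recommendation to High priority on its own.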

Target States Must Remain the Goal

Many organizations will be tempted to ignore their target states and strive for consistent year-over-year overall score improvement. Praetorian strongly cautions against this approach for two primary reasons. First, the law of diminishing returns holds as true in security programs as it does anywhere else. Second, we assume the effort required to achieve the top score in any control or objective to be exponential, meaning that the effort to move from 1 to 2 will be significantly less than the effort to move from 4 to 5.

Chasing an ever-increasing score will lead to disappointment when the resources required to improve the organization’s overall score become cost prohibitive. To correctly manage resources and balance security, risk, and operational efficiency, organizations should strive simply to meet their target states and continue adjusting them over time as new information becomes available.

Additionally, organizations should not be disheartened when their overall score fluctuates at higher levels of maturity. Praetorian finds fluctuation and regression from year to year acceptable in high-performing and mature organizations. Less mature organizations should see consistent increases early in the development of a security program, but all organizations will and should plateau. New threats, regulations, risks, and a changing environment can all impact scores and may require remediation that was not previously necessary.

Conclusion

Architecting a mature, measurable, and useful security program is no easy task. First, organizations must define what framework(s) they want to utilize, and then they must develop a scoring and assessment mechanism to measure themselves against the chosen framework. Lastly, they must develop a way to prioritize the recommended work that will enable them to achieve their desired level of maturity, or target state. Many organizations will have entire departments dedicated to these tasks, and others will ask staff to tackle these tasks as additional duties.

The time, thought, and effort an organization puts into these three steps will yield prioritized recommendations and action items. These become the framework for growing and maintaining a successful strategic security program from year to year. Without this sort of prioritized roadmap, organizations will risk misallocating resources, will see a limited return on security investments, and will end up with a security program that lacks direction.