Last week we published a new release of Nosey Parker, our fast and low-noise secrets detector. The v0.14.0 release adds significant features that make it easier for a human to review findings, and a number of smaller features and changes that improve signal-to-noise. The full release notes are available here.

Release highlights

File names and commit information for findings from Git repositories

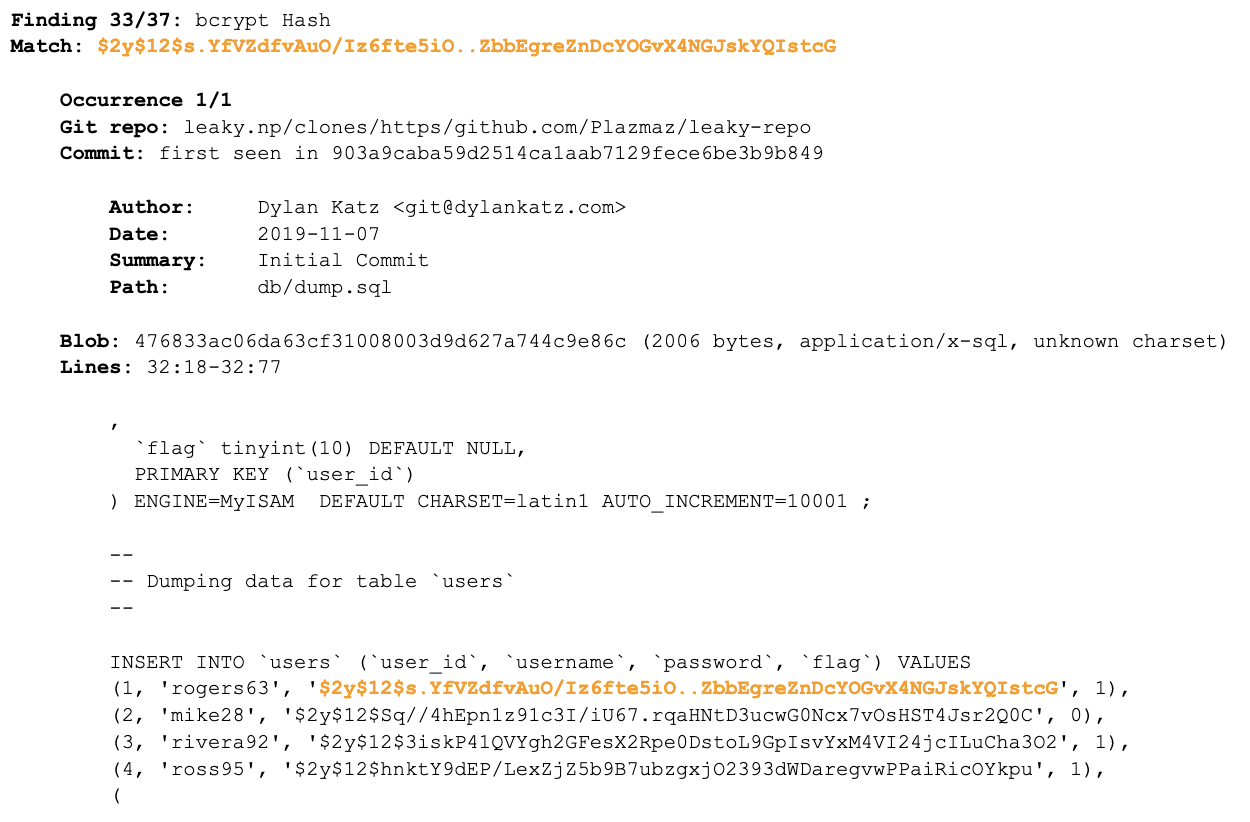

The most significant update, in terms of user impact and developer effort, is that Nosey Parker now by default collects and reports filenames and commit information for findings from Git repositories. Specifically, the noseyparker report command now provides the commit(s) and pathname for matches that it finds in files from Git repository history.

An example of this information in Nosey Parker’s default human report format appears in the Figure. In particular, notice the Commit section, which includes the commit author, date, commit message summary, and the file path. (Note for the diligent: the input repo we scanned for this example does not contain real secrets; it is a demo repository that is intentionally full of fake secrets.)

Figure: A sample of Nosey Parker’s default human report format.

Pain Point: Human-Friendly Data Output at Speed

Prior to this release, all that Nosey Parker reported in these cases was the repository path and blob ID (the 40-character Git hash of the file). That information was enough to uniquely identify the file, but not enough to make it easy to further investigate the finding or discuss it with a client. In fact, one of the most common questions from new users was something like, “How can I get Nosey Parker to tell me the file name and commit date?”

This feature was missing prior to this release not because we didn’t understand its utility, but because it’s actually tricky to collect this information efficiently. Unlike most other secret detection tools which enumerate Git commits and scan the files referenced in each one, Nosey Parker enumerates Git’s blobs directly, scanning each one once. This approach is asymptotically more efficient (and part of what makes Nosey Parker so fast), but it means that file names and commit metadata needs to be reconstructed after the fact. This is challenging to do without dramatically slowing down scanning!

How We Solved It

To solve this challenge, the v0.14.0 release of Nosey Parker includes a possibly novel graph-based algorithm for reconstructing file name and commit metadata for all blobs found within a Git repository. The big idea is to build an in-memory representation of the inverted Git commit graph when scanning a repository, then traverse that in topological order, determining for each commit the set of blobs that were first introduced there, with respect to all other commits in the repository.

The implementation uses an adaptation of Kahn’s algorithm for topological traversal, combined with a priority queue ordered by commit node out-degree. The priority queue approach heuristically minimizes memory use by keeping the work list of nodes that still need to be visited small. Additionally, the implementation uses data representations that provide good CPU cache behavior and constant factors, such as compressed bitmaps to keep track of which blobs have been seen at each commit.

The end result is that Nosey Parker now determines for all blobs found within a Git repository the commits in which they first appeared, typically with less than 20% runtime overhead. This cost is well worth the additional information it allows Nosey Parker to report.

Ability to guess file types

Nosey Parker now reports its guess for the MIME type of each file with findings. This is evident in the Figure, where we see (2006 bytes, application/x-sql, unknown charset) on the “Blob” line. This extra metadata can help human reviewers more quickly determine if a finding is worthy of additional investigation. For example, something appearing within an application/x-sql file (possibly a SQL database dump) is likely to be more interesting than the same type of finding appearing within an application/x-markdown file (probably a README file with example credentials).

The implementation of file type guessing in this release uses a lookup table of file extensions to MIME types. This runs with negligible cost, but is imprecise. A more precise implementation of file type guessing based on libmagic (the guts of the venerable file tool on most systems) also is available in this release, but behind a compile-time libmagic feature flag, as it significantly slows down scanning speed, complicates the build process, and pulls in a large amount of unhardened parsing code written in C. This is an area to revisit in the future.

More regex-based detection rules

The open-source version of Nosey Parker uses regular expression matching as its detection mechanism. This release adds six new rules that detect the following four kinds of things:

- HuggingFace User Access Tokens

- Amazon Resource Names (ARNs)

- Amazon S3 bucket names

- Google Cloud Storage bucket names

Notably, the last three kinds of things are not secrets, so why add rules for them? Our security engineers requested them in support of our offensive security engagements with clients. ARNs and bucket names can be useful to attackers for target identification, and our engineers use the same techniques that malicious attackers use.

These rules are less useful for defensive applications of Nosey Parker. For a future release, we are investigating the addition of a more expressive mechanism for choosing which rules to use (#51), and may split the default set of rules into multiple rulesets to better support different secret detection use cases.

More command-line options; more sensible default values

Nosey Parker now includes a number of additional command-line options for scanning, and has improved default values of some options.

First, by default, the contextual snippets around matches are now twice as large, up to 256 bytes before and after. This typically results in four to seven lines of context in the snippet before and after each match. This change has negligible impact on performance, but makes for easier human review of findings. Users can override this setting with the new --snippet-length=N option.

Second, the new default method that Nosey Parker uses to clone Git repositories reduces the amount of out-of-scope content it scans when using the automatic Git repository cloning options. Repositories are now cloned using Git’s --bare option instead of --mirror'. Users can override this setting with the new --git-clone-mode={bare, mirror} option.

Further context behind the choice of cloning method

The “mirror” cloning mode that was previously the default copies all git refs, whereas “bare” only copies branch heads. The “mirror” cloning mode was initially the default because it can expose additional content (reported by others in 2022 as “GitBleed”). However, repositories hosted on GitHub store the content of forks within the main repository (!), which means a --mirror clone of a main repository effectively scans all forks as well. For popular projects, most of the forked repos are out-of-scope during an engagement, so not scanning that content improves signal-to-noise in most cases.

Future work

This is only the third official release of the open-source version of Nosey Parker, but it brings significant improvements and new features. The work is not complete, however! Possibilities for future development include adding native support for enumerating additional input sources (containers, archive files, PDFs, etc.) or improving the reporting capabilities.

You can help improve Nosey Parker! Try the tool and let us know your experience or cool things you’ve done with it in the GitHub discussions page. If you run into problems, please let us know by creating an issue. We would also welcome the community contribution of additional high-precision regular expression-based rules; we have provided a dedicated documentation page about that. Happy hunting!