Which Came First: The System Prompt, or the RCE?

During a recent penetration test, we came across an AI-powered desktop application that acted as a bridge between Claude (Opus 4.5) and a third-party asset management platform. The idea is simple: instead of clicking through dashboards and making API calls, users just ask the agent to do it for them. “How many open tickets do […]

AI-Driven Offensive Security: The Current Landscape and What It Means for Defense

The capabilities of modern AI models have advanced far beyond what most people in the security industry have fully internalized. AI-generated phishing, script writing, and basic offensive automation are getting plenty of attention, but what happens when you apply agentic AI to the full lifecycle of building, testing, and refining custom malware and command-and-control (C2) […]

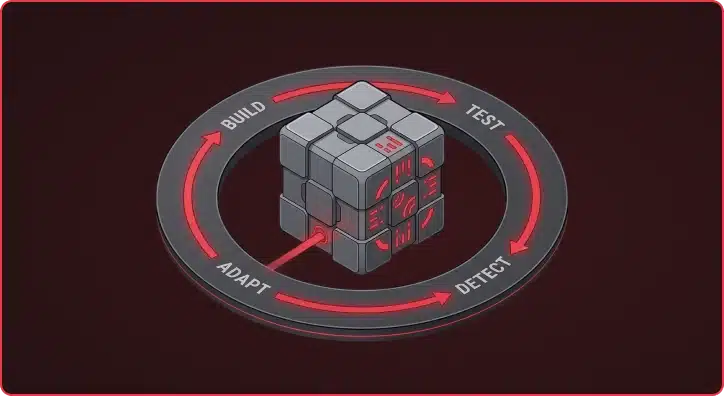

Augustus v0.0.9: Multi-Turn Attacks for LLMs That Fight Back

Single-turn jailbreaks are getting caught. Guardrails have matured. The easy wins — “ignore previous instructions,” base64-encoded payloads, DAN prompts — trigger refusals on most production models within milliseconds. But real attackers don’t give up after one message. They have conversations. Augustus v0.0.9 now ships with a unified engine for LLM multi-turn attacks, with four distinct […]

Mapping the Unknown: Introducing Pius for Organizational Asset Discovery

Asset discovery is an essential part of Praetorian’s service delivery process. When we are engaged to carry out continuous external penetration testing, one key action is to build and maintain a thorough target asset inventory that goes beyond any lists or databases provided by the system owner. Pius is our open-source attack surface mapping tool […]

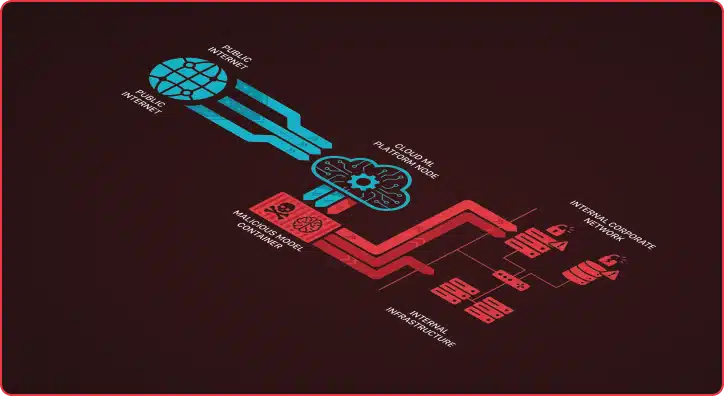

Beyond Prompt Injection: The Hidden AI Security Threats in Machine Learning Platforms

What’s the first thing you think of when you hear about AI attacks and vulnerabilities? If you’re like most people, your mind probably jumps to Large Language Model (LLM) vulnerabilities—system prompt disclosures, jailbreaks, or prompt injections that trick chatbots into revealing sensitive information or behaving in unintended ways. These risks have dominated headlines and security […]

Building Bridges, Breaking Pipelines: Introducing Trajan

TL;DR: Trajan is an open-source CI/CD security tool from Praetorian that unifies vulnerability detection and attack validation across GitHub Actions, GitLab CI, Azure DevOps, and Jenkins in a single cross-platform engine. It ships with 32 detection plugins and 24 attack plugins covering poisoned pipeline execution, secrets exposure, self-hosted runner risks, and AI/LLM pipeline vulnerabilities. It […]

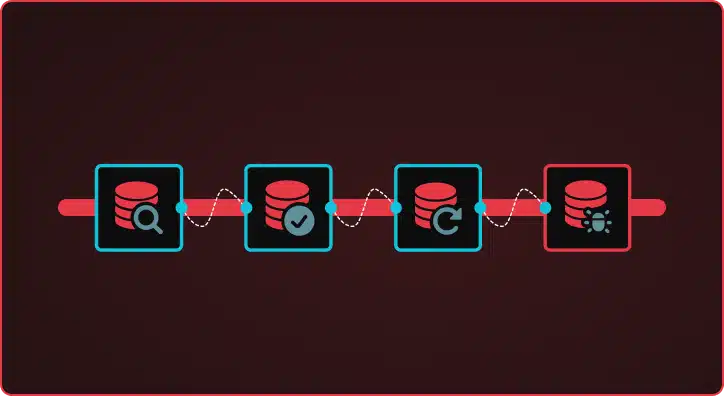

How AI Agents Automate CVE Vulnerability Research

The CVE Researcher is a multi-agent AI pipeline that automates vulnerability research, detection template generation, and exploitation analysis. Built on Google’s Agent Development Kit (ADK), it coordinates specialized AI models through four phases — deep research, technology reconnaissance, actor-critic template generation, and exploitation analysis — to produce production-ready Nuclei detection templates overnight. Beyond Simple Automation […]

What’s Running on That Port? Introducing Nerva for Service Fingerprinting

Nerva is a high-performance, open-source CLI tool that identifies what services are running on open network ports. It fingerprints 120+ protocols across TCP, UDP, and SCTP, averaging 4x faster than nmap -sV with 99% detection accuracy. Written in Go as a single binary, Nerva helps security teams rapidly move from port discovery to service identification. […]

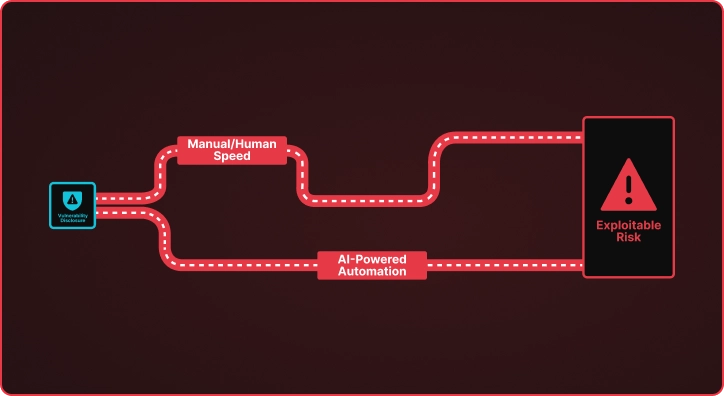

AI-Powered CVE Research: Winning the Race Against Emerging Vulnerabilities

The Vulnerability Time Gap: When CISA adds a new CVE to the Known Exploited Vulnerabilities catalog, a clock starts ticking. Security teams must understand the vulnerability, determine if they are exposed, and deploy detection mechanisms before adversaries weaponize the flaw. This process traditionally takes days or weeks of manual research by skilled security engineers who […]

There’s Always Something: Secrets Detection at Engagement Scale with Titus

TL;DR: Titus is an open-source secret scanner from Praetorian that detects and validates leaked credentials across source code, binary files, and HTTP traffic. It ships with 450+ detection rules and runs as a CLI, Go library, Burp Suite extension, or Chrome browser extension, putting secrets detection everywhere you already work during engagements. Say you find […]